Supabase Storage in Practice: File Uploads, Access Control, and CDN Acceleration

At 3 AM, I was staring at console error messages in disbelief. The user avatar upload feature had been live for just 30 minutes when users started reporting a bizarre issue: everyone’s avatar had turned into the same person’s photo.

After investigating, the culprit was clear—missing RLS Policy configuration in Storage. The bucket was public, upload paths had no user isolation, and anyone’s upload could overwrite someone else’s file. Simply put, one oversight in permission configuration had nearly caused a production incident.

Supabase Storage is easy to get started with, but mastering it—access control, CDN acceleration, image transformations—comes with plenty of pitfalls. This article shares the lessons I learned the hard way, so you don’t have to.

1. Quick Start: Standard File Uploads

Let’s start with the basics—getting a file uploaded.

Creating a Bucket

Open the Supabase console, find Storage in the left sidebar, and click “New bucket.” Give your bucket a name, like avatars for profile pictures or posts for article images. There’s an option asking “Make this bucket public?”—hold off on checking that for now; we’ll cover permissions in detail later.

My approach: private buckets for sensitive files, public buckets for static assets. Default to private, adjust as needed.

SDK Upload Code

Assuming you’ve installed @supabase/supabase-js, the code is straightforward:

import { createClient } from '@supabase/supabase-js'

const supabase = createClient(
  'https://your-project.supabase.co',
  'your-anon-key'
)

// Upload file
async function uploadFile(file: File) {
  const filePath = `uploads/${Date.now()}-${file.name}`

  const { data, error } = await supabase.storage
    .from('avatars') // bucket name
    .upload(filePath, file, {
      cacheControl: '3600', // cache for 1 hour
      upsert: false // error if file exists, don't overwrite
    })

  if (error) {
    console.error('Upload failed:', error.message)
    return null
  }

  return data.path // return file path
}

Honestly, I’ve written this code at least ten times. The key is filePath design. We’ll cover why I use timestamp prefixes and how to implement user isolation later.
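Since filePath is built from a user-supplied file name, it can end up containing spaces, brackets, or other characters that a storage key may not accept. Here is a minimal sketch of one way to build a safer path. buildObjectPath is a hypothetical helper (not part of supabase-js), and the allowed character set is my own conservative assumption rather than a documented rule.

```typescript
// Hypothetical helper (not part of supabase-js): builds "<folder>/<timestamp>-<safe name>".
// The allowed character set is a conservative assumption, not an official rule.
function buildObjectPath(folder: string, fileName: string, nowMs: number = Date.now()): string {
  const dot = fileName.lastIndexOf('.')
  const base = dot > 0 ? fileName.slice(0, dot) : fileName
  const ext = dot > 0 ? fileName.slice(dot + 1).toLowerCase() : ''

  // Collapse every run of characters outside [a-zA-Z0-9_-] into a single dash,
  // then trim leading/trailing dashes and cap the length.
  const safeBase =
    base
      .normalize('NFKD')
      .replace(/[^a-zA-Z0-9_-]+/g, '-')
      .replace(/^-+|-+$/g, '')
      .slice(0, 80) || 'file'

  const safeExt = ext.replace(/[^a-z0-9]/g, '')
  const name = safeExt ? `${safeBase}.${safeExt}` : safeBase

  // The timestamp prefix keeps two uploads of the same file name from colliding.
  return `${folder}/${nowMs}-${name}`
}
```

In uploadFile, this would replace the template string: const filePath = buildObjectPath('uploads', file.name).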

File Size Limits

Official docs say standard uploads support files up to 5GB. In practice, files under 6MB work best with standard uploads; for files over 6MB, I recommend TUS protocol resumable uploads.

What is TUS? Simply put, it supports resumable uploads for large files. If the network drops, you can resume from where you left off instead of starting over. This makes a huge difference for videos and large images—imagine a user at 90% progress when their connection drops. Without TUS, they’d have to re-upload everything.

Enabling TUS requires additional configuration. If you don’t need it right now, standard uploads handle most scenarios just fine.
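To make that "additional configuration" a bit more concrete, here is a rough sketch of the options a TUS client such as tus-js-client would need. The helper names (chooseUploadMethod, buildResumableOptions) are hypothetical. The endpoint path, the metadata keys, and the 6MB chunk size follow my reading of Supabase's resumable upload docs, so treat them as assumptions and check them against the current documentation.

```typescript
// Option names mirror what tus-js-client's `new Upload(file, options)` accepts.
interface ResumableUploadOptions {
  endpoint: string
  retryDelays: number[]
  headers: Record<string, string>
  uploadDataDuringCreation: boolean
  removeFingerprintOnSuccess: boolean
  metadata: Record<string, string>
  chunkSize: number
}

const SIX_MB = 6 * 1024 * 1024

// Threshold taken from the rule of thumb above: standard upload up to 6MB, TUS beyond that.
function chooseUploadMethod(sizeBytes: number): 'standard' | 'resumable' {
  return sizeBytes > SIX_MB ? 'resumable' : 'standard'
}

// Hypothetical helper: assembles the options object for a TUS upload.
function buildResumableOptions(params: {
  projectUrl: string // e.g. https://your-project.supabase.co
  accessToken: string // the signed-in user's access token
  bucket: string
  objectPath: string
  contentType: string
}): ResumableUploadOptions {
  return {
    // Assumed endpoint path for resumable uploads
    endpoint: `${params.projectUrl}/storage/v1/upload/resumable`,
    retryDelays: [0, 3000, 5000, 10000, 20000],
    headers: { authorization: `Bearer ${params.accessToken}` },
    uploadDataDuringCreation: true,
    removeFingerprintOnSuccess: true,
    // Assumed metadata keys telling Storage where the object goes
    metadata: {
      bucketName: params.bucket,
      objectName: params.objectPath,
      contentType: params.contentType,
      cacheControl: '3600',
    },
    chunkSize: SIX_MB,
  }
}
```

With tus-js-client installed, the result would be passed along with onError, onProgress, and onSuccess callbacks to new tus.Upload(file, options), followed by upload.start().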

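// Note: .upload() always sends a standard (single-request) upload, even for large files

const { data, error } = await supabase.storage
  .from('videos')
  .upload('large-video.mp4', file, {
    duplex: 'half', // passed through to fetch() for streamed request bodies; it does not enable TUS
  })

// Resumable uploads need a TUS client (for example tus-js-client or Uppy)
// pointed at the project's /storage/v1/upload/resumable endpoint

2. Security Configuration: RLS Policy Deep Dive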

Back to that 3 AM incident—missing permissions, files getting overwritten freely.

Supabase Storage keeps its file metadata in PostgreSQL (the storage.objects table), so access control uses the same RLS (Row Level Security) approach as the database. Think of each file as a row in that table, with the bucket recorded in the row's bucket_id column.

Public vs. Private Buckets

When creating a bucket, you’ll see the option: “Public bucket” or “Private bucket.”

Public bucket: Anyone can read without authentication. Great for publicly accessible assets like avatars and website logos.

Private bucket: Requires authentication to access. But here’s the catch—authentication is just the gateway. Who can read, who can write—that’s controlled by RLS Policy.

My recommendation: unless files are truly public, default to private buckets. Configuring permissions properly before opening access is far safer than trying to fix issues afterward.

Types of RLS Policies

In the Storage Policy page, you’ll see four operations:

- SELECT: Read files (download, get URL)

- INSERT: Upload new files

- UPDATE: Update/overwrite existing files

- DELETE: Delete files

Each operation can have its own Policy. The most common pattern:

-- Users can only manage their own files
CREATE POLICY "Users manage own files"
ON storage.objects FOR ALL
USING (auth.uid()::text = (storage.foldername(name))[1]);

This SQL looks complex, so let’s break it down:

- auth.uid() gets the current logged-in user’s ID

- storage.foldername(name) returns the folder segments of the file path as an array, and [1] picks the first-level directory (PostgreSQL arrays are 1-indexed)

- For example, if the path is user123/avatar.jpg, the first-level directory is user123

The Policy logic: users can only operate on files where the first-level directory matches their user ID. This is the core approach for user isolation.
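To get a feel for what the policy compares, here is a small TypeScript imitation of the check. It is only an approximation for reasoning about paths (the real check runs inside PostgreSQL), and folderSegments and passesOwnerCheck are hypothetical names.

```typescript
// Rough imitation of storage.foldername(name): every path segment except the file name.
function folderSegments(objectPath: string): string[] {
  return objectPath.split('/').slice(0, -1)
}

// Mirrors auth.uid()::text = (storage.foldername(name))[1].
// Index 0 here corresponds to [1] in PostgreSQL, where arrays are 1-indexed.
function passesOwnerCheck(userId: string, objectPath: string): boolean {
  return folderSegments(objectPath)[0] === userId
}

console.log(passesOwnerCheck('user123', 'user123/avatar.jpg'))
console.log(passesOwnerCheck('user123', 'uploads/user123/avatar.jpg'))
```

The first call passes because user123 is the first folder. The second fails because the first folder is uploads, and a file with no folder at all fails as well.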

Implementing User Isolation

How to implement this? Put the user ID in the first path level during upload:

async function uploadAvatar(userId: string, file: File) {
  // Path design: userId/filename
  const filePath = `${userId}/avatar-${Date.now()}.jpg`

  const { data, error } = await supabase.storage
    .from('avatars')
    .upload(filePath, file)

  if (error) {
    console.error('Avatar upload failed:', error.message)
    return null
  }

  return data.path
}

This way, each user’s files live in their own “folder.” RLS Policy only allows users to operate on paths starting with their ID, so they can’t touch anyone else’s files.

Generating Signed URLs

Files in private buckets return 404 when accessed directly. You need to generate signed URLs:

// Generate temporary access URL (valid for 1 hour)
const { data, error } = await supabase.storage
  .from('avatars')
  .createSignedUrl('user123/avatar.jpg', 3600)

console.log(data?.signedUrl) // full URL with signature

Set the validity period yourself. Too long is insecure; too short hurts user experience. Generally, 1-4 hours works well.
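One way to soften the "too short" side of that trade-off is to remember when each signed URL expires and request a fresh one shortly before that. The sketch below only covers the bookkeeping. SignedUrlEntry, makeEntry, needsRefresh, and the 5-minute safety margin are all hypothetical choices, not part of supabase-js.

```typescript
// Hypothetical bookkeeping for signed URLs; nothing here comes from supabase-js.
interface SignedUrlEntry {
  url: string
  expiresAtMs: number
}

// Record a URL together with its expiry, given the ttl passed to createSignedUrl.
function makeEntry(url: string, ttlSeconds: number, issuedAtMs: number): SignedUrlEntry {
  return { url, expiresAtMs: issuedAtMs + ttlSeconds * 1000 }
}

// True when there is no URL yet, or the URL expires within the safety margin.
function needsRefresh(
  entry: SignedUrlEntry | undefined,
  nowMs: number,
  marginSeconds: number = 300
): boolean {
  if (!entry) return true
  return nowMs >= entry.expiresAtMs - marginSeconds * 1000
}
```

Before rendering an image, the caller would check needsRefresh(entry, Date.now()) and only call createSignedUrl again when it returns true.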

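For files in a public bucket, use getPublicUrl instead. It only builds the URL string and makes no request, so it cannot make a private file public; the bucket itself has to be public for the URL to work:

const { data } = supabase.storage
  .from('public-assets')
  .getPublicUrl('logo.png')

// This URL doesn't need a signature, anyone can access it

Common Policy Pitfalls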

Here are pitfalls I’ve encountered:

- Forgot to configure INSERT Policy: Users can log in but can’t upload files. Error message: “new row violates row-level security policy”

- Policy too permissive: Using USING (true) means all files are accessible to everyone. This is the same as having no RLS at all.

- Poor path design: If the user ID isn’t in the first path level, RLS foldername extraction fails. I made this mistake: writing paths as uploads/user123/file.jpg extracted uploads, causing Policy validation to fail.

When configuring Policies, test in the console’s SQL editor first. Confirm the logic works before applying to production.

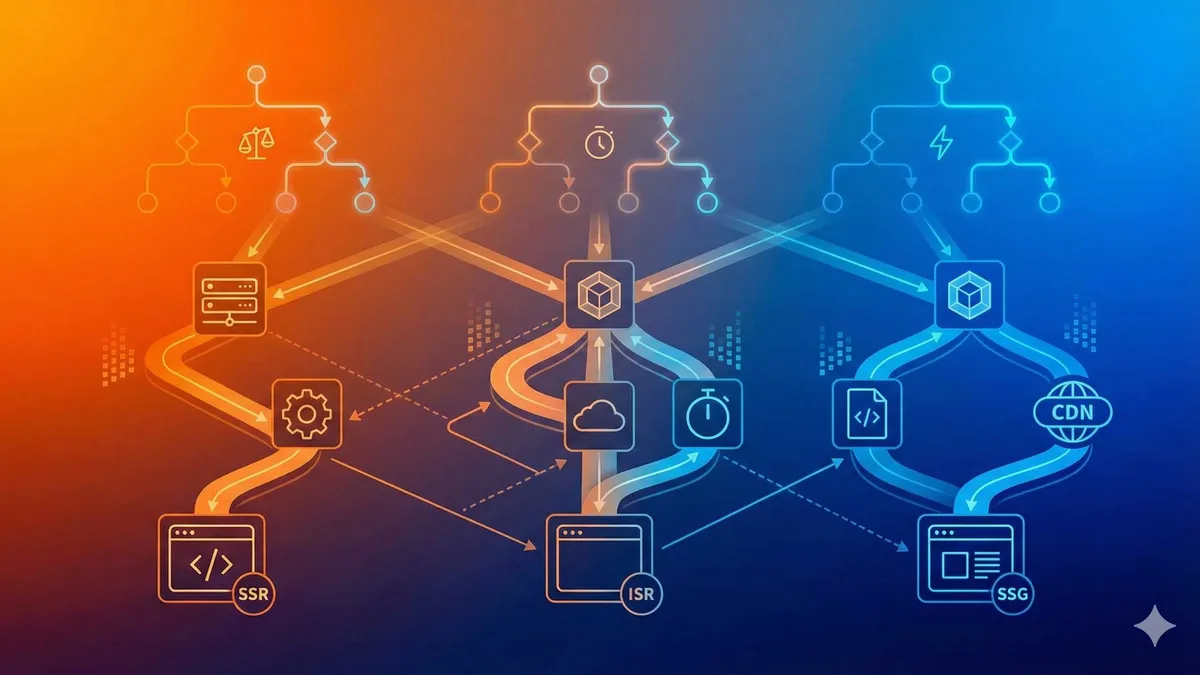

3. Performance Optimization: Smart CDN and Image Transformations

Files uploaded, permissions configured. Next question: how do we make files load faster?

How Smart CDN Works

Supabase’s Smart CDN isn’t your average CDN. As I understand the docs, it syncs file metadata to the edge, so files can stay cached for a long time and the cache is revalidated automatically when a file is updated or deleted.

Official docs state that cache invalidation can take up to 60 seconds to propagate globally. This means if you update a file in Tokyo, users in New York see the latest version within about 60 seconds. Much faster than traditional CDNs that can take minutes or hours.

But Smart CDN is a paid feature requiring Pro Plan ($25/month). Free Plan users can still access files, just without Smart CDN’s automatic revalidation: caching there follows the cacheControl value set at upload time.

Image Transformation Parameters

This is a feature I love: no need to handle image resizing or cropping yourself. Just add parameters to the URL.

Basic parameters:

?width=300&height=200 // specify dimensions
?resize=contain // maintain aspect ratio, no crop
?resize=cover // fill dimensions, crop excess
?quality=80 // image quality (20-100)
?format=origin // keep the original format (otherwise WebP is served automatically to browsers that support it)

Combined usage:

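// Ask the SDK for a transformed URL. It points at the image rendering endpoint
// (/render/image/...) rather than the plain object URL, which ignores these parameters
const thumbnailUrl = supabase.storage
  .from('avatars')
  .getPublicUrl('user123/avatar.jpg', {
    transform: { width: 100, height: 100, resize: 'cover' }
  }).data.publicUrl

Image transformation limits: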

- Dimension range: 1 to 2500 pixels

- Original file size: maximum 25MB

- Supported formats: JPEG, PNG, WebP, GIF, AVIF

Exceeding limits throws an error. I once tried to resize a 30MB original—got rejected immediately.
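Catching these cases on the client saves a round trip. Below is a minimal sketch that checks a request against the dimension and file-size limits listed above before asking for a transformed URL. transformProblems and TransformRequest are hypothetical names, and the constants simply restate the limits from this article.

```typescript
// Limits restated from the list above; adjust if the documented limits change.
const MAX_DIMENSION = 2500
const MAX_SOURCE_BYTES = 25 * 1024 * 1024

interface TransformRequest {
  width?: number
  height?: number
  sourceBytes: number // size of the original file
}

// Returns a list of human-readable problems; an empty list means the request looks fine.
function transformProblems(req: TransformRequest): string[] {
  const problems: string[] = []
  const dims: Array<[string, number | undefined]> = [
    ['width', req.width],
    ['height', req.height],
  ]

  for (const [label, value] of dims) {
    if (value === undefined) continue
    if (!Number.isInteger(value) || value < 1 || value > MAX_DIMENSION) {
      problems.push(`${label} must be an integer between 1 and ${MAX_DIMENSION}`)
    }
  }

  if (req.sourceBytes > MAX_SOURCE_BYTES) {
    problems.push('original file is larger than 25MB')
  }

  return problems
}
```

My rejected 30MB original would have been flagged here: transformProblems({ width: 300, sourceBytes: 30 * 1024 * 1024 }) returns one problem about the file size.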

Pricing: Free Tier per Project

Image transformations are billed per transformation count, not storage size.

Each project gets 100 free image transformations per month. After that, $5 per 1,000 transformations.

Honestly, for personal projects or small teams, 100 is plenty. My blog project transforms maybe a few dozen avatars and images monthly. Unless you’re building an Instagram-like image social app, this cost shouldn’t be a concern.
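For a quick back-of-the-envelope estimate, the pricing above turns into a one-line formula. This sketch uses the figures quoted in this article (100 free per month, then $5 per 1,000) and assumes the overage is prorated linearly, which is my simplification. estimateTransformCostUsd is a hypothetical helper, and current pricing should be checked before relying on the numbers.

```typescript
// Figures quoted in this article; verify against current pricing before relying on them.
const FREE_TRANSFORMS_PER_MONTH = 100
const USD_PER_1000_EXTRA = 5

// Assumes overage is prorated linearly (a simplification, not a documented billing rule).
function estimateTransformCostUsd(transformsThisMonth: number): number {
  const billable = Math.max(0, transformsThisMonth - FREE_TRANSFORMS_PER_MONTH)
  return (billable / 1000) * USD_PER_1000_EXTRA
}

console.log(estimateTransformCostUsd(50)) // within the free quota
console.log(estimateTransformCostUsd(2100)) // 2,000 billable transformations
```

Under these assumptions, a blog doing a few dozen transformations a month pays nothing, and 2,100 transformations would come to $10.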

Next.js Integration: Image Loader

If you use Next.js, you can configure Supabase’s Image Loader to let next/image handle transformations automatically:

// next.config.js
module.exports = {
  images: {
    loader: 'custom',
    loaderFile: './supabase-image-loader.js',
  }
}

Then write the loader file:

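// supabase-image-loader.js
export default function supabaseLoader({ src, width, quality }) {
  const params = new URLSearchParams()
  params.set('width', width.toString())
  params.set('quality', (quality || 75).toString())

  // Transformations are served from the /render/image/ endpoint, so map a plain
  // public object URL onto it (this assumes src is a full public object URL)
  const renderUrl = src.replace(
    '/storage/v1/object/public/',
    '/storage/v1/render/image/public/'
  )

  return `${renderUrl}?${params.toString()}`
}

Now using <Image src="..." width={300} /> in Next.js automatically adds transformation parameters.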

Pro Plan Threshold

The Smart CDN and image transformations mentioned earlier both require Pro Plan. Free Plan users only get basic upload and download functionality.

Should you upgrade? It depends on your project. For storing a few avatars, Free Plan is plenty. But for handling lots of images with performance optimization, Pro Plan’s CDN acceleration and image transformations can save significant development time—no need to build your own CDN or image processing service.

My approach: start with Free Plan during development, upgrade to Pro once traffic stabilizes. $25/month isn’t trivial.

4. Real-World Example: Complete Blog Project Configuration

Enough theory—let’s look at a complete example. Here’s my blog project’s Storage configuration, from zero to production.

Scenario: User Avatars + Article Images

Two buckets needed:

- avatars: User profile pictures, private bucket, users can only manage their own avatars

- post-images: Article images, private bucket, authors can upload, anyone can read (using signed URLs)

Step 1: Create Buckets

Console operations:

- Storage > New bucket > enter avatars as name, check Private

- Create post-images the same way

Step 2: Configure RLS Policies

Policies for avatars bucket:

-- Allow users to read all avatars (public read)
CREATE POLICY "Anyone can view avatars"
ON storage.objects FOR SELECT
USING (bucket_id = 'avatars');

-- Users can only upload their own avatar
CREATE POLICY "Users manage own avatar"
ON storage.objects FOR INSERT
WITH CHECK (bucket_id = 'avatars' AND auth.uid()::text = (storage.foldername(name))[1]);

-- Users can only overwrite their own avatar (upsert needs UPDATE as well as INSERT)
CREATE POLICY "Users update own avatar"
ON storage.objects FOR UPDATE
USING (bucket_id = 'avatars' AND auth.uid()::text = (storage.foldername(name))[1]);

-- Users can only delete their own avatar
CREATE POLICY "Users delete own avatar"
ON storage.objects FOR DELETE
USING (bucket_id = 'avatars' AND auth.uid()::text = (storage.foldername(name))[1]);

Policies for post-images bucket:

-- Authors can upload article images
-- (assumes the author role is stored in the user's app_metadata; the top-level
-- role claim in the JWT holds the database role, such as authenticated)
CREATE POLICY "Authors can upload post images"
ON storage.objects FOR INSERT
WITH CHECK (
  bucket_id = 'post-images'
  AND (auth.jwt() -> 'app_metadata' ->> 'role') = 'author'
);

-- Everyone can read article images
CREATE POLICY "Public read post images"
ON storage.objects FOR SELECT
USING (bucket_id = 'post-images');

Step 3: Frontend Upload Code

Avatar upload component:

async function handleAvatarUpload(file: File) {
  const user = await supabase.auth.getUser()
  if (!user.data.user) return alert('Please log in first')

  // Path: userId/avatar.jpg (fixed filename, each upload overwrites old avatar)
  const filePath = `${user.data.user.id}/avatar.jpg`

  const { error } = await supabase.storage
    .from('avatars')
    .upload(filePath, file, { upsert: true })

  if (error) {
    console.error('Avatar upload failed:', error.message)
    return
  }

  // The bucket is private, so getPublicUrl would not work here.
  // The SELECT Policy allows all reads, so create a signed URL instead (valid for 1 hour)
  const { data } = await supabase.storage
    .from('avatars')
    .createSignedUrl(filePath, 3600)

  if (data) setUserAvatar(data.signedUrl)
}

Article image upload:

async function handlePostImageUpload(file: File) {
  const filePath = `posts/${Date.now()}-${file.name}`

  const { error } = await supabase.storage
    .from('post-images')
    .upload(filePath, file)

  if (error) {
    console.error('Post image upload failed:', error.message)
    return
  }

  // Generate signed URL, valid for 24 hours
  // (the link stops working after that, so keep filePath if the image must outlive it)
  const { data: urlData } = await supabase.storage
    .from('post-images')
    .createSignedUrl(filePath, 86400)

  if (urlData) insertImageToEditor(urlData.signedUrl)
}

Step 4: Test Verification

Before going live, verify these key points:

- Can unauthenticated users see article images? (Should work—SELECT Policy allows it)

- Can regular users upload article images? (Should fail—only author role allowed)

- Can User A overwrite User B’s avatar? (Should fail—path isolation)

Test each scenario to confirm Policies are correctly configured. That 3 AM lesson—I don’t want to experience it again.
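Before testing against the real project, I find it useful to write down what each Policy is supposed to allow as a tiny in-memory model. The sketch below restates the three checks above in TypeScript. Caller, canRead, and canInsert are hypothetical names, and the model only documents the intended rules; it does not replace testing against the actual database.

```typescript
// In-memory model of the intended rules; the real enforcement happens in PostgreSQL.
interface Caller {
  userId: string | null // null means not logged in
  role?: string // e.g. 'author'
}

// First folder of the object path, or undefined when the file sits at the bucket root.
function firstFolder(objectPath: string): string | undefined {
  return objectPath.split('/').slice(0, -1)[0]
}

// Both buckets have a SELECT Policy that allows everyone to read.
function canRead(bucket: string): boolean {
  return bucket === 'avatars' || bucket === 'post-images'
}

function canInsert(bucket: string, objectPath: string, caller: Caller): boolean {
  if (bucket === 'avatars') {
    // Path isolation: the first folder must be the caller's own ID.
    return caller.userId !== null && firstFolder(objectPath) === caller.userId
  }
  if (bucket === 'post-images') {
    // Only callers with the author role may upload article images.
    return caller.role === 'author'
  }
  return false
}
```

Each bullet in the checklist maps to one call: canRead('post-images') should be true, canInsert('post-images', ...) should be false for a regular user, and canInsert('avatars', 'userB/avatar.jpg', ...) should be false for User A.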

Summary

Supabase Storage comes down to three core things: upload, permissions, acceleration.

Upload is simplest—a few lines of code. But permissions need careful attention—RLS Policy isn’t a one-time setup, it requires testing against your business scenarios. CDN and image transformations are nice-to-haves, available with Pro Plan, and they do save development time.

My experience: get upload and permissions working first, ensure no security incidents. Add CDN later when performance becomes a concern, add transformations when image processing needs arise. Take it step by step, don’t try to do everything at once.

If you’re using Supabase Storage too, I’d love to hear about your experiences. That 3 AM incident of mine—I’m sure I’m not the only one who’s been there.

Complete Supabase Storage Configuration Workflow

From creating buckets to configuring permissions to CDN acceleration—full hands-on guide

⏱️ Estimated time: 30 min

Step 1: Create Bucket

Create a private bucket in Supabase console:

• Go to Storage > New bucket

• Enter name (e.g., avatars)

• Check Private (recommended default)

• Click Create bucket

Step 2: Configure RLS Policy

Set up Row Level Security for the bucket:

• Go to Storage > Select bucket > Policies

• Click New Policy

• Choose operation type (SELECT/INSERT/UPDATE/DELETE)

• Write Policy rules (e.g., user isolation)

• Test before applying to production

Step 3: Upload Files

Upload files using SDK:

• Design path structure (e.g., userId/filename)

• Call storage.from().upload()

• Set cacheControl and upsert parameters

• Handle upload errors and return path

Step 4: Configure CDN and Image Transformations (Optional)

Pro Plan unlocks advanced features:

• Smart CDN auto-caches popular files

• Image transformation URL parameters (width/height/format)

• Next.js Image Loader integration

• Monitor free quota (100 images/month)

FAQ

What's the difference between Public and Private buckets?

How do I configure RLS Policy for user isolation?

• Design upload path as userId/filename

• Policy uses auth.uid()::text = (storage.foldername(name))[1]

• This ensures users can only operate on paths starting with their ID

How do I share files from a private bucket?

What are the file upload limitations?

What image transformation parameters and limits are supported?

• width/height: dimensions (range 1-2500 pixels)

• resize: contain (maintain aspect ratio) or cover (crop to fill)

• quality: quality level (20-100)

• format: origin (keep the original format; otherwise WebP is served automatically to browsers that support it)

Limit: original file must not exceed 25MB

Do Smart CDN and image transformations require payment?

9 min read · Published on: Apr 9, 2026 · Modified on: Apr 9, 2026
