GitHub Actions Deployment Strategies: From VPS to Cloud Platforms CD Pipeline

It’s 3 AM, and I’m staring at the GitHub Actions log interface, watching red errors scroll up line by line. “Host key verification failed.” SSH issues again.

This is already the fifth deployment failure. Everything passes locally, but explodes once pushed to GitHub. At that moment, I really wanted to curse—but I also realized something: the choice of deployment strategy is far more complex than I thought.

Whether it’s a self-managed VPS or hosting platforms like Vercel and Cloudflare Pages, every solution has its pitfalls. Choose wrong, and late-night debugging sessions will only increase.

This article explores several GitHub Actions deployment strategies to help you find the path that suits you best.

VPS SSH Deployment: Old School but Reliable

To be honest, I initially resisted VPS deployment. It seemed too troublesome—SSH keys, known_hosts, rsync parameters… a bunch of things to configure.

But after stepping through several pitfalls, I found this “old school” approach to be the most controllable.

SSH Key Configuration: Don’t Hardcode

The most common question is where to put SSH keys.

Beginners often write the private key directly into the workflow file. Big mistake. GitHub Secrets is the right place.

Go to repository Settings → Secrets and variables → Actions and add SSH_PRIVATE_KEY. Then use it in your workflow like this:

```yaml
- name: Setup SSH
  uses: webfactory/ssh-agent@v0.9.0
  with:
    ssh-private-key: ${{ secrets.SSH_PRIVATE_KEY }}
```

This action automatically starts ssh-agent and loads your key. Hassle-free.

known_hosts: Avoiding “Host key verification failed”

When SSH connects to a server for the first time, it asks if you trust this host. Interactive prompts can’t be handled in a CI environment, so you need to add the server fingerprint to known_hosts beforehand.

Two approaches:

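Method 1: Use an action to add automatically

webfactory/ssh-agent only loads the key and has no known-hosts input, so this variant uses shimataro/ssh-key-action, which installs the key and the known_hosts entries in one step:

```yaml
- name: Install SSH key and known hosts
  uses: shimataro/ssh-key-action@v2
  with:
    key: ${{ secrets.SSH_PRIVATE_KEY }}
    known_hosts: ${{ secrets.SSH_KNOWN_HOSTS }}
```

Get SSH_KNOWN_HOSTS content like this:

```bash
ssh-keyscan -H your-server.com >> known_hosts.txt
# Copy file contents to GitHub Secrets
```

Method 2: Manual configuration

```yaml
- name: Add server to known hosts
  run: |
    mkdir -p ~/.ssh
    ssh-keyscan -H ${{ secrets.SERVER_IP }} >> ~/.ssh/known_hosts
```

Method 1 is cleaner, and it pins the fingerprint you recorded instead of trusting whichever host answers at run time. Method 2 is better for quick debugging.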
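A pasted known_hosts secret is easy to truncate or leave empty, and the failure only shows up later as “Host key verification failed.” The sketch below is one way to sanity-check the content before it is written to ~/.ssh/known_hosts. The function name check_known_hosts is hypothetical, and the check is deliberately shallow: it only confirms that every entry has a host field, a key type, and a key.

```bash
#!/usr/bin/env bash
# Hypothetical helper: sanity-check known_hosts content passed as one string.
# Prints the number of entries on success; returns 1 if the content is empty
# or an entry has fewer than three fields (host, key type, base64 key).
check_known_hosts() {
  local content="$1" line count=0
  local -a fields
  while IFS= read -r line; do
    # Skip blank lines and comments.
    if [[ -z "$line" || "$line" == \#* ]]; then
      continue
    fi
    read -r -a fields <<< "$line"
    if (( ${#fields[@]} < 3 )); then
      echo "malformed entry: $line" >&2
      return 1
    fi
    count=$((count + 1))
  done <<< "$content"
  if (( count == 0 )); then
    echo "no host keys found" >&2
    return 1
  fi
  echo "$count"
}

# Example (in a workflow step, with the secret mapped to an env variable):
#   check_known_hosts "$SSH_KNOWN_HOSTS" >/dev/null || exit 1
```

Failing early here keeps the error message next to its cause instead of three steps later in the rsync log.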

rsync or scp?

For deployment file transfer, I use rsync. The reasons are simple:

- Only transfers changed files, saving time

- Can exclude specific directories (like node_modules)

- Supports incremental sync

A typical rsync command:

```yaml
- name: Deploy to server
  run: |
    rsync -avz --delete \
      --exclude 'node_modules' \
      --exclude '.git' \
      ./dist/ ${{ secrets.SERVER_USER }}@${{ secrets.SERVER_IP }}:/var/www/html/
```

The --delete parameter removes files in the destination that don’t exist in the source. Use it carefully, or you might delete things you shouldn’t.
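Since --delete is the risky part, one option is to preview a sync before running it for real. The sketch below builds the same argument list as the command above and adds rsync's --dry-run and --itemize-changes flags when DRY_RUN=1 is set. The function name rsync_args and the DRY_RUN variable are illustrative, not part of any existing setup.

```bash
#!/usr/bin/env bash
# Hypothetical helper: print the rsync arguments one per line.
# With DRY_RUN=1, rsync only reports what it would transfer or delete.
rsync_args() {
  local src="$1" dest="$2"
  local -a args=(-avz --delete --exclude 'node_modules' --exclude '.git')
  if [[ "${DRY_RUN:-0}" == "1" ]]; then
    args+=(--dry-run --itemize-changes)
  fi
  printf '%s\n' "${args[@]}" "$src" "$dest"
}

# Example usage (host and path are placeholders):
#   mapfile -t ARGS < <(DRY_RUN=1 rsync_args ./dist/ user@host:/var/www/html/)
#   rsync "${ARGS[@]}"
```

In the dry-run output, files that would be removed are listed with a deleting marker, so a wrong destination path is visible before anything is lost.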

Post-deployment Commands: Restarting Services

Static websites are done once files are transferred. But if you’re deploying a Node.js application, you need to restart the service.

I like using PM2 to manage Node processes. After deployment, execute:

```yaml
- name: Restart application
  run: |
    ssh ${{ secrets.SERVER_USER }}@${{ secrets.SERVER_IP }} \
      "cd /var/www/app && pm2 restart all"
```

A safer approach is to restart one specific application:

```yaml
- name: Restart application
  run: |
    ssh ${{ secrets.SERVER_USER }}@${{ secrets.SERVER_IP }} \
      "pm2 restart my-app --update-env"
```

--update-env reloads environment variables, which is useful when configuration has changed.

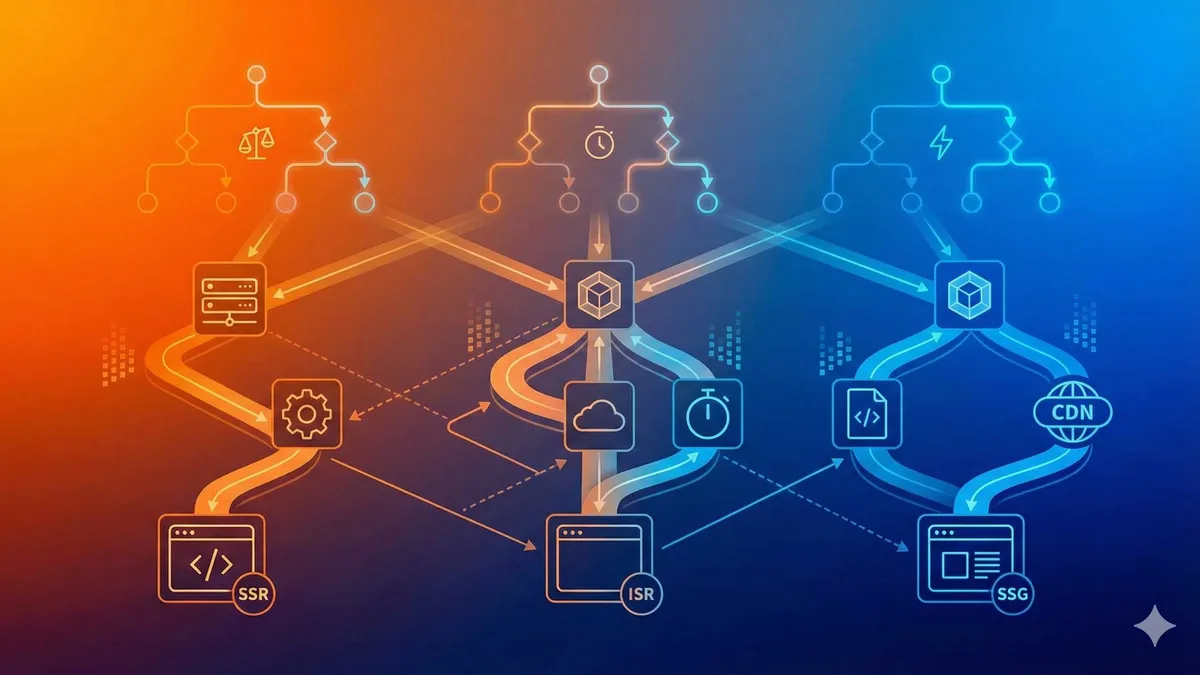
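A restart command can return before the application is actually accepting requests, so a deployment may look green while the service is still coming up. A small retry loop around a health check is one way to close that gap. This is a sketch under assumptions: the retry function is hypothetical, and the health URL in the usage comment is a placeholder for whatever endpoint your application exposes.

```bash
#!/usr/bin/env bash
# retry <attempts> <delay-seconds> <command...>
# Runs the command until it succeeds or the attempts are used up.
retry() {
  local attempts="$1" delay="$2" i
  shift 2
  for (( i = 1; i <= attempts; i++ )); do
    if "$@"; then
      return 0
    fi
    echo "attempt $i/$attempts failed" >&2
    # Wait before the next attempt, but not after the last one.
    if (( i < attempts )); then
      sleep "$delay"
    fi
  done
  return 1
}

# Example usage after the restart step (placeholder URL):
#   retry 5 3 curl -fsS https://example.com/health
```

curl's -f flag makes HTTP error responses count as failures, which is what lets the loop distinguish a running service from one that only answers with errors.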

Cloud Platform Deployment: The Convenience of Managed Services

The problem with VPS deployment is that you have to manage the server yourself. Security patches, SSL certificate renewals, firewall rules… lots of chores.

Managed platforms are much easier. Push code, auto-build, auto-deploy. You just write code.

Vercel: First Choice for Frontend Projects

Vercel’s support for frontend projects is nearly perfect. Next.js, Astro, React—one-click deployment, zero configuration.

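But if your project needs a backend API, pay attention. Vercel’s Serverless Functions have execution time limits that depend on your plan (10 seconds on the free tier and 60 seconds on Pro when I set this up; Vercel has revised these numbers over time, so check the current limits). Exceed the limit and the request times out.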

For pure static sites or simple APIs, Vercel is sufficient. Complex backend services still need your own management.

GitHub Actions deployment to Vercel configuration:

```yaml
name: Deploy to Vercel

on:
  push:
    branches: [main]

# The Vercel CLI reads these to locate the project in CI
env:
  VERCEL_ORG_ID: ${{ secrets.VERCEL_ORG_ID }}
  VERCEL_PROJECT_ID: ${{ secrets.VERCEL_PROJECT_ID }}

jobs:
  deploy:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - name: Install Vercel CLI
        run: npm i -g vercel@latest
      - name: Pull Vercel Environment Information
        run: vercel pull --yes --environment=production --token=${{ secrets.VERCEL_TOKEN }}
      - name: Build Project Artifacts
        run: vercel build --prod --token=${{ secrets.VERCEL_TOKEN }}
      - name: Deploy Project Artifacts to Vercel
        run: vercel deploy --prebuilt --prod --token=${{ secrets.VERCEL_TOKEN }}
```

Generate VERCEL_TOKEN from the Vercel console and store it in GitHub Secrets, together with VERCEL_ORG_ID and VERCEL_PROJECT_ID (both appear in .vercel/project.json after you run vercel link locally).

Cloudflare Pages: Generous Free Tier

Cloudflare Pages has a much more generous free tier than Vercel. Unlimited bandwidth, 500 builds per month—more than enough for personal projects.

Cloudflare’s global CDN is also really fast. In my own tests, access from Asia was more stable than with Vercel.

Deployment configuration:

```yaml
name: Deploy to Cloudflare Pages

on:
  push:
    branches: [main]

jobs:
  deploy:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - name: Install dependencies
        run: npm ci
      - name: Build
        run: npm run build
      - name: Deploy
        uses: cloudflare/pages-action@v1
        with:
          apiToken: ${{ secrets.CLOUDFLARE_API_TOKEN }}
          accountId: ${{ secrets.CLOUDFLARE_ACCOUNT_ID }}
          projectName: my-project
          directory: dist
```

Another benefit of Cloudflare: R2 storage also has a generous free tier. Static assets can go to R2 and, combined with the Pages CDN, load much faster.

Netlify: The Veteran Stable Choice

I use Netlify less than the first two, but it’s a veteran hosting platform with a mature ecosystem.

Deployment configuration is similar:

```yaml
- name: Deploy to Netlify
  uses: netlify/actions/cli@master
  with:
    args: deploy --prod
  env:
    NETLIFY_AUTH_TOKEN: ${{ secrets.NETLIFY_AUTH_TOKEN }}
    NETLIFY_SITE_ID: ${{ secrets.NETLIFY_SITE_ID }}
```

Netlify’s form handling feature is quite practical: it processes form submissions automatically, which suits simple marketing pages.

Limitations of Managed Platforms

That said, managed platforms aren’t a cure-all.

A few common limitations:

- Constrained build environment: Memory and CPU have limits; large projects may fail to build

- Low customization: Want to modify nginx configuration? No way

- Platform dependency: If the platform shuts down or changes policies, you have to migrate

- Access issues in China: Some platforms have unstable access in China (though Cloudflare has improved)

If your project needs complete control, you’ll still have to go back to VPS.

Hybrid Strategy: Balancing Flexibility and Control

Many projects aren’t “pure static” or “pure backend.” Frontend is Next.js, backend needs to connect to database, run cron jobs…

In this case, hybrid deployment might be the optimal solution.

Static Page Hosting + API Deployment to VPS

A typical architecture:

- Static pages (HTML/CSS/JS) deployed to Cloudflare Pages or Vercel

- Node.js API service deployed to your own VPS

- Database also on VPS (or use Supabase/PlanetScale hosting)

Each part does what it’s best at:

- Frontend enjoys CDN acceleration and automatic HTTPS

- Backend has complete control, not limited by the hosting platform

- Low database access latency (API and database on same machine)

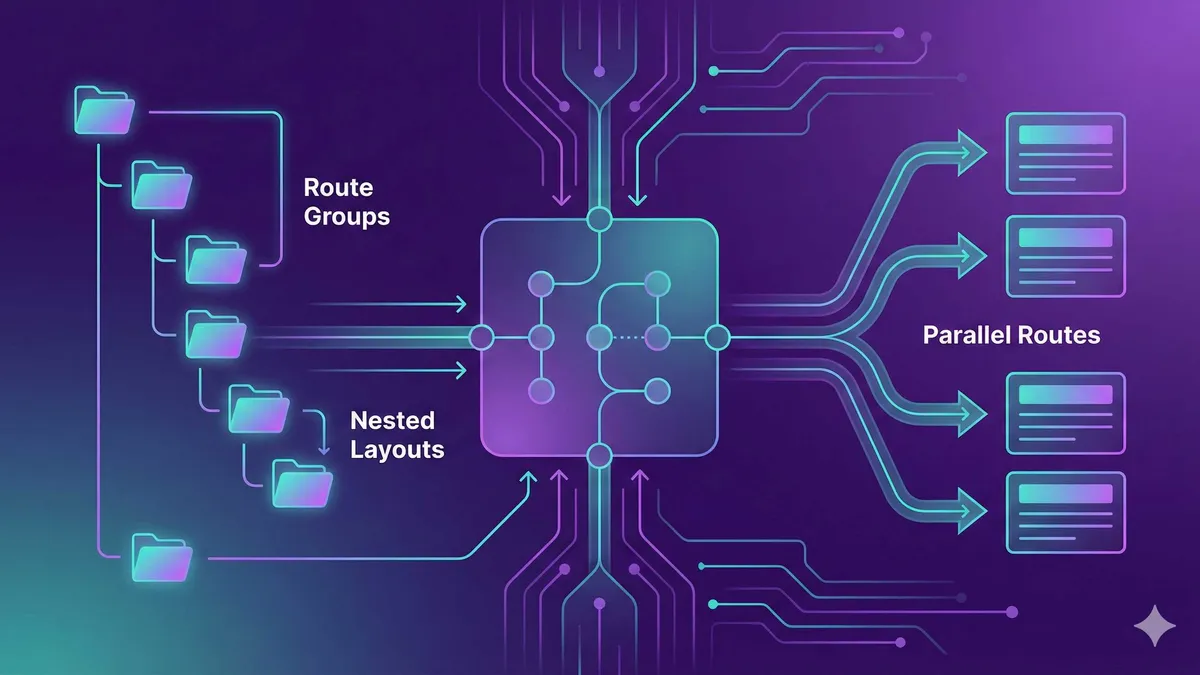

Multi-stage Deployment with GitHub Actions

Use one workflow to deploy to two places simultaneously:

```yaml
name: Hybrid Deploy

on:
  push:
    branches: [main]

jobs:
  build:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - name: Install dependencies
        run: npm ci
      - name: Build
        run: npm run build
      - name: Upload artifact
        uses: actions/upload-artifact@v4
        with:
          name: build-output
          path: dist

  deploy-frontend:
    needs: build
    runs-on: ubuntu-latest
    steps:
      - name: Download artifact
        uses: actions/download-artifact@v4
        with:
          name: build-output
          path: dist
      - name: Deploy to Cloudflare Pages
        uses: cloudflare/pages-action@v1
        with:
          apiToken: ${{ secrets.CLOUDFLARE_API_TOKEN }}
          accountId: ${{ secrets.CLOUDFLARE_ACCOUNT_ID }}
          projectName: my-frontend
          directory: dist

  deploy-backend:
    needs: build
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - name: Setup SSH
        uses: webfactory/ssh-agent@v0.9.0
        with:
          ssh-private-key: ${{ secrets.SSH_PRIVATE_KEY }}
      # Without this step, rsync and ssh stop at the host key prompt
      - name: Add server to known hosts
        run: |
          mkdir -p ~/.ssh
          ssh-keyscan -H ${{ secrets.SERVER_IP }} >> ~/.ssh/known_hosts
      - name: Deploy API to VPS
        run: |
          rsync -avz --delete \
            --exclude 'node_modules' \
            ./api/ ${{ secrets.SERVER_USER }}@${{ secrets.SERVER_IP }}:/var/www/api/
      - name: Restart API service
        run: |
          ssh ${{ secrets.SERVER_USER }}@${{ secrets.SERVER_IP }} \
            "cd /var/www/api && npm install && pm2 restart api"
```

This workflow has three jobs:

- build: Build project, produce static files

- deploy-frontend: Deploy static files to Cloudflare Pages

- deploy-backend: Deploy API to VPS and restart service

needs: build ensures deployment jobs only execute after build completes. upload-artifact and download-artifact pass build artifacts between jobs.

Environment Variable Separation

A challenge with hybrid deployment: frontend and backend have different environment variables.

Frontend needs to know the API address; backend needs to know the database password.

My approach:

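```yaml
# Frontend job
- name: Set frontend env
  run: |
    echo "API_URL=https://api.mydomain.com" >> $GITHUB_ENV

# Backend job
- name: Deploy with env
  run: |
    ssh ${{ secrets.SERVER_USER }}@${{ secrets.SERVER_IP }} \
      "cd /var/www/api && DATABASE_URL='${{ secrets.DATABASE_URL }}' pm2 restart api --update-env"
```

Values written to $GITHUB_ENV are visible to later steps in the same job. On the backend side, the variable assignment has to come before the pm2 command, because --update-env refreshes the process from the environment of the shell that runs it.

Sensitive information (database passwords, API tokens) always goes through GitHub Secrets. Non-sensitive information (API addresses) can be written in the workflow.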

Practical Configuration Example

Below is a complete VPS deployment workflow, covering all the points mentioned earlier.

Complete Workflow File

```yaml
name: Deploy to VPS

on:
  push:
    branches: [main]
  workflow_dispatch: # Manual deployment trigger

env:
  NODE_VERSION: '20'

jobs:
  test:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - name: Setup Node.js
        uses: actions/setup-node@v4
        with:
          node-version: ${{ env.NODE_VERSION }}
          cache: 'npm'
      - name: Install dependencies
        run: npm ci
      - name: Run tests
        run: npm test

  build:
    needs: test
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - name: Setup Node.js
        uses: actions/setup-node@v4
        with:
          node-version: ${{ env.NODE_VERSION }}
          cache: 'npm'
      - name: Install dependencies
        run: npm ci
      - name: Build
        run: npm run build
      - name: Upload build artifact
        uses: actions/upload-artifact@v4
        with:
          name: dist
          path: dist
          retention-days: 1

  deploy:
    needs: build
    runs-on: ubuntu-latest
    steps:
      - name: Download build artifact
        uses: actions/download-artifact@v4
        with:
          name: dist
          path: dist
      - name: Setup SSH
        uses: webfactory/ssh-agent@v0.9.0
        with:
          ssh-private-key: ${{ secrets.SSH_PRIVATE_KEY }}
      - name: Add server to known hosts
        run: |
          mkdir -p ~/.ssh
          ssh-keyscan -H ${{ secrets.SERVER_HOST }} >> ~/.ssh/known_hosts
      - name: Deploy files
        run: |
          rsync -avz --delete \
            --exclude '.htaccess' \
            ./dist/ ${{ secrets.SERVER_USER }}@${{ secrets.SERVER_HOST }}:${{ secrets.DEPLOY_PATH }}
      - name: Verify deployment
        run: |
          ssh ${{ secrets.SERVER_USER }}@${{ secrets.SERVER_HOST }} \
            "ls -la ${{ secrets.DEPLOY_PATH }}"
      - name: Send deployment notification
        if: always()
        run: |
          # Double quotes so the shell expands GITHUB_SHA; job.status reports success or failure
          curl -X POST "${{ secrets.NOTIFICATION_WEBHOOK }}" \
            -H "Content-Type: application/json" \
            -d "{\"text\": \"Deployment ${{ job.status }}: ${GITHUB_SHA}\"}"
```

Required Secrets Configuration

| Secret Name | Description | How to Obtain |
|---|---|---|
| SSH_PRIVATE_KEY | SSH private key content | Generate locally, put public key on server |
| SERVER_HOST | Server IP or domain | Your VPS information |
| SERVER_USER | SSH login username | Usually root or ubuntu |
| DEPLOY_PATH | Deployment target path | Like /var/www/html |
| NOTIFICATION_WEBHOOK | Deployment notification address | Slack/Telegram webhook |
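The notification step builds its JSON body inside a quoted shell string, which is easy to get wrong: inside single quotes the shell never expands variables, and nested double quotes need escaping. One way around this is to move the formatting into a small function. This is a sketch only. The name notification_payload is hypothetical, and it assumes the status and SHA contain no quotes or backslashes, which holds for GitHub's job status values and commit SHAs.

```bash
#!/usr/bin/env bash
# notification_payload <status> <sha>
# Prints a small JSON body with the status and the first 7 characters of the SHA.
notification_payload() {
  local status="$1" sha="$2"
  printf '{"text": "Deployment %s: %s"}\n' "$status" "${sha:0:7}"
}

# Example usage in a workflow step (webhook URL mapped to an env variable):
#   curl -X POST "$NOTIFICATION_WEBHOOK" \
#     -H "Content-Type: application/json" \
#     -d "$(notification_payload "${{ job.status }}" "$GITHUB_SHA")"
```

Using printf with a fixed format string keeps the JSON skeleton in single quotes and the variable parts as plain arguments, so there is no escaping to maintain.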

Troubleshooting Common Issues

When deployment fails, looking at logs can easily be confusing—too much information.

My troubleshooting sequence:

- SSH connection issues: Check the “Setup SSH” and “Add server to known hosts” steps

  - If they failed, check the key format and the known_hosts content

- rsync transfer issues: Check the “Deploy files” step

  - If it failed, check whether the path exists and whether permissions are correct

- Service restart issues: Check the “Verify deployment” step

  - If it failed, check whether the files exist in the target path

A tip: Add debug output after failed steps.

```yaml
- name: Debug SSH connection
  if: failure()
  run: |
    echo "SSH config:"
    cat ~/.ssh/config || echo "No config file"
    echo "Known hosts:"
    cat ~/.ssh/known_hosts || echo "No known_hosts file"
    ssh -v ${{ secrets.SERVER_USER }}@${{ secrets.SERVER_HOST }} echo "Connection test"
```

ssh -v outputs detailed logs, showing where the problem is.
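Verbose SSH output is long, and most of it is noise. The sketch below maps a few common OpenSSH error messages to the step that usually needs attention. The function name diagnose_ssh_log and the hint wording are illustrative, and the list of messages is far from complete.

```bash
#!/usr/bin/env bash
# diagnose_ssh_log reads log text on stdin and prints one hint.
diagnose_ssh_log() {
  local log
  log="$(cat)"
  case "$log" in
    *"Host key verification failed"*)
      echo "known_hosts: server fingerprint missing or changed" ;;
    *"invalid format"*|*"error in libcrypto"*)
      echo "key format: secret is truncated or missing BEGIN/END lines" ;;
    *"Permission denied (publickey"*)
      echo "key: public key not in authorized_keys, or wrong user" ;;
    *"Connection refused"*|*"Connection timed out"*)
      echo "network: wrong host or port, or a firewall is blocking the runner" ;;
    *)
      echo "unknown: read the last lines of the ssh -v output" ;;
  esac
}

# Example usage: pipe a saved step log into the function.
#   diagnose_ssh_log < ssh-debug.log
```

The first matching pattern wins, so the order roughly follows the troubleshooting sequence above: host verification first, then the key, then the network.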

Summary

After writing all this, it really comes down to one sentence: there’s no perfect deployment solution, only the solution that best fits your project.

Selection recommendations:

- Pure static sites (blogs, documentation): Cloudflare Pages or Vercel, hassle-free

- Simple API + frontend: Managed platforms are sufficient; don’t bother with a VPS

- Complex backend + database: VPS or cloud server, control is important

- Hybrid architecture: Frontend hosting + backend VPS, each taking their strengths

Whichever you choose, GitHub Actions configuration patterns are similar: build → transfer → restart. Break these three steps down clearly, and you won’t get lost during debugging.
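The build → transfer → restart pattern can also be made explicit in a script, so that a failure names its stage. What follows is a minimal sketch, not a drop-in deploy script: run_stage, deploy, and the three command arrays are hypothetical names, and the commands in the usage comment are placeholders.

```bash
#!/usr/bin/env bash
# run_stage <name> <command...>
# Announces the stage, runs the command, and names the stage on failure.
run_stage() {
  local name="$1"
  shift
  echo "==> $name"
  if ! "$@"; then
    echo "FAILED at stage: $name" >&2
    return 1
  fi
}

# deploy runs the three stages in order and stops at the first failure.
# BUILD_CMD, TRANSFER_CMD, and RESTART_CMD are arrays set by the caller.
deploy() {
  run_stage build "${BUILD_CMD[@]}" &&
    run_stage transfer "${TRANSFER_CMD[@]}" &&
    run_stage restart "${RESTART_CMD[@]}"
}

# Example usage (placeholder host and paths):
#   BUILD_CMD=(npm run build)
#   TRANSFER_CMD=(rsync -avz --delete ./dist/ user@host:/var/www/html/)
#   RESTART_CMD=(ssh user@host "pm2 restart my-app --update-env")
#   deploy
```

Keeping each stage as a separate command array means a stage can be swapped, for example rsync for a platform CLI, without touching the control flow.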

One more thing: don’t panic when deployment fails. Read logs section by section, and first determine whether it’s an SSH connection issue or a command execution issue. Add a debug step, and the problem will quickly reveal itself.

Next time deployment fails at 3 AM, I hope you can find the reason faster.

Configure GitHub Actions VPS Deployment Pipeline

Configure GitHub Actions automatic deployment to VPS from scratch, including SSH keys, rsync transfer, and service restart

⏱️ Estimated time: 30 min

Step 1: Generate SSH Key Pair

Generate an SSH key specifically for GitHub Actions locally:

```bash
ssh-keygen -t ed25519 -C "github-actions" -f github-actions-key
```

This generates two files:

- github-actions-key (private key) → Add to GitHub Secrets

- github-actions-key.pub (public key) → Add to server ~/.ssh/authorized_keys

Step 2: Configure GitHub Secrets

Go to repository Settings → Secrets and variables → Actions and add the following Secrets:

- SSH_PRIVATE_KEY: Complete private key file content (including BEGIN/END lines)

- SERVER_HOST: Server IP or domain

- SERVER_USER: SSH login username (like root or ubuntu)

- DEPLOY_PATH: Deployment target path (like /var/www/html)

Sensitive information must never be written in the workflow file.
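A private key pasted without its BEGIN/END lines is one of the more common causes of a failing SSH setup step. The sketch below checks that key text on stdin starts and ends with the expected markers. The function name check_private_key is hypothetical, and the check looks only at the framing lines, not at whether the key itself is valid.

```bash
#!/usr/bin/env bash
# check_private_key reads key text on stdin and succeeds if the first and
# last non-empty lines are BEGIN and END private key markers.
check_private_key() {
  local content first last
  # Drop blank lines so stray empty lines at the edges don't hide the markers.
  content="$(grep -v '^[[:space:]]*$')"
  first="$(printf '%s\n' "$content" | head -n 1)"
  last="$(printf '%s\n' "$content" | tail -n 1)"
  if [[ "$first" != "-----BEGIN "*"PRIVATE KEY-----" ]]; then
    echo "missing BEGIN line" >&2
    return 1
  fi
  if [[ "$last" != "-----END "*"PRIVATE KEY-----" ]]; then
    echo "missing END line" >&2
    return 1
  fi
}

# Example usage before pasting the key into GitHub Secrets:
#   check_private_key < github-actions-key
```

Because the match is exact, a key saved with Windows line endings also fails the check, which is useful: the trailing carriage returns tend to break key loading as well.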

Step 3: Create Workflow File

Create deployment configuration in .github/workflows/deploy.yml:

```yaml
name: Deploy to VPS

on:
  push:
    branches: [main]

jobs:
  deploy:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - name: Setup SSH
        uses: webfactory/ssh-agent@v0.9.0
        with:
          ssh-private-key: ${{ secrets.SSH_PRIVATE_KEY }}
      - name: Add server to known hosts
        run: |
          mkdir -p ~/.ssh
          ssh-keyscan -H ${{ secrets.SERVER_HOST }} >> ~/.ssh/known_hosts
```

Step 4: Configure File Transfer

Use rsync to transfer build artifacts:

```yaml
- name: Deploy files
  run: |
    rsync -avz --delete --exclude 'node_modules' --exclude '.git' ./dist/ ${{ secrets.SERVER_USER }}@${{ secrets.SERVER_HOST }}:${{ secrets.DEPLOY_PATH }}
```

Key parameter explanation:

- -avz: Archive mode, verbose output, compressed transfer

- --delete: Delete extra files at destination

- --exclude: Exclude directories not needed for transfer

Step 5: Add Post-deployment Commands

Node.js applications need to restart services:

```yaml
- name: Restart application
  run: |
    ssh ${{ secrets.SERVER_USER }}@${{ secrets.SERVER_HOST }} "cd /var/www/app && pm2 restart all --update-env"
```

The --update-env parameter reloads environment variables, which suits deployments with configuration changes.

FAQ

What to do when GitHub Actions deployment to VPS fails SSH connection?

1. Check private key format: Ensure private key in GitHub Secrets includes complete BEGIN/END lines

2. Check public key configuration: Public key must be added to server ~/.ssh/authorized_keys file

3. Check known_hosts: Use ssh-keyscan command to add server fingerprint in advance

4. Add debug step: Use ssh -v to output detailed logs to locate the problem

What's the difference between rsync and scp? Which should I use for deployment?

For deployment, rsync is usually the better choice:

- Incremental transfer: Only transfers changed files, saves bandwidth and time

- Exclude functionality: Can skip directories like node_modules

- Delete synchronization: --delete parameter can remove extra files at destination

- Resumable transfer: With --partial, an interrupted transfer can pick up where it stopped instead of starting over

scp is suitable for one-time transfer of small amounts of files.

How to choose between managed platforms (Vercel/Cloudflare Pages) and VPS?

- Pure static sites: Choose managed platforms, simple configuration, CDN acceleration

- Simple API: Managed platforms are sufficient, note runtime limits (Vercel free tier 10 seconds)

- Complex backend: Choose VPS, have complete control, not limited by platform

- Hybrid architecture: Frontend hosting + backend VPS, each taking their strengths

How to use environment variables from GitHub Secrets on the server?

Method 1: Pass via SSH command

```yaml
- name: Restart with env
  run: |
    ssh user@host "DB_URL='${{ secrets.DB_URL }}' pm2 restart app --update-env"
```

The assignment goes before pm2, since --update-env reads the environment of the shell that runs the command.

Method 2: Use .env file on server

```yaml
- name: Update .env
  run: |
    ssh user@host "echo 'DB_URL=${{ secrets.DB_URL }}' > /var/www/app/.env"
```

I recommend Method 2: it’s safer and easier to manage.
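For the .env approach, one option is to render the file on the runner and stream it over SSH's stdin, so the secret never appears in the remote command line. A minimal sketch, assuming the secret reaches the step through an env: mapping (for example DB_URL: ${{ secrets.DB_URL }}). The function name render_env is hypothetical.

```bash
#!/usr/bin/env bash
# render_env VAR1 VAR2 ...
# Prints KEY=VALUE lines for the named variables and fails if any of them
# is unset or empty, so a missing secret stops the deploy early.
render_env() {
  local name
  for name in "$@"; do
    if [[ -z "${!name:-}" ]]; then
      echo "missing variable: $name" >&2
      return 1
    fi
    printf '%s=%s\n' "$name" "${!name}"
  done
}

# Example usage (placeholder host and path):
#   env_file="$(render_env DB_URL)" &&
#     printf '%s\n' "$env_file" |
#       ssh user@host 'umask 077 && cat > /var/www/app/.env'
```

Rendering into a variable first means the remote file is only overwritten when every variable was present. The umask keeps the new file readable by its owner only.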

How to quickly locate problems when deployment fails?

1. SSH connection issues: Check logs from Setup SSH and known_hosts steps

2. File transfer issues: Check Deploy files step, verify path and permissions

3. Service startup issues: Check restart command output

Add a debug step to output detailed information:

```yaml
- name: Debug
  if: failure()
  run: ssh -v user@host "ls -la /deploy/path"
```

How to implement hybrid deployment (frontend hosting + backend VPS)?

1. build job: Build project, save artifacts with upload-artifact

2. deploy-frontend job: Download artifacts, deploy to hosting platform

3. deploy-backend job: SSH to VPS to deploy backend service

Key points:

- Use needs to control execution order

- Use artifacts to pass files between jobs

- Manage frontend and backend environment variables separately

8 min read · Published on: Apr 7, 2026 · Modified on: Apr 8, 2026
