Self-Evolving AI: Key Technical Paths for Continuous Model Learning

Introduction

At 3 AM, Claude was still discussing the feasibility of this technical solution with me.

This was the fifth round of conversation. It remembered the performance bottleneck I mentioned earlier, the three optimization approaches we tried, and even that offhand comment I made: “I feel like we can squeeze out a bit more performance.”

I realized something: this AI was “evolving.” Not in the technical sense of parameter updates, but in the sense that it increasingly understood my thought patterns and anticipated my needs.

This got me thinking about a question: Can AI not just “learn” knowledge instilled by humans, but continuously learn and self-evolve like humans do?

When AI Meets the “Peak at Launch” Dilemma

Let’s be honest, today’s large models face an awkward reality.

Whether it’s GPT-5 or Claude 3.5, no matter how many parameters or capabilities they have, they’re essentially “peak at launch” products. The moment model training completes, its knowledge is frozen.

A model trained in 2023 can’t understand news from 2025. Even if you feed it the latest articles, it’s just “open-book testing”—not truly “learning” anything new.

Richard Sutton, the father of reinforcement learning, made a sharp criticism: current large language models are just “frozen past knowledge,” lacking the ability to learn in real-time through environmental interaction.

It’s like a PhD stranded on a deserted island—amazing knowledge reserves, but facing the entirely new challenges of jungle survival, can neither learn new survival skills nor create new tools.

More critically, once you try to make the model learn new knowledge, it “forgets” old knowledge. This phenomenon has an academic name: Catastrophic Forgetting.

Sounds fancy, but simply put: the model learns new knowledge and loses old knowledge. Like learning Python and forgetting JavaScript, learning Japanese and forgetting English.

This is why self-evolving AI became a tech hotspot in 2025. Because everyone working on AI is thinking about the same question:

How can models learn new things without forgetting old things?

What Exactly Is Self-Evolving AI Evolving?

Before diving into technical solutions, let’s clarify one question: What exactly is evolving?

According to a survey paper jointly published by 16 teams including Princeton and Tsinghua, the growth of self-evolving agents can be broken down into four dimensions.

First dimension: Model-level evolution. This is easiest to understand—changing model parameter weights. Like human brain neural synapses reconnecting to form new memories and skills.

Second dimension: Context evolution. This is more interesting. The model itself doesn’t change, but accumulates experience through memory systems. Like you might not remember that formula from three years ago, but you know which book to find it in.

Third dimension: Tool evolution. Learning to use new tools, even creating new tools. Like humanity’s leap from using stones to building robots.

Fourth dimension: Architecture evolution. This is the most hardcore—changing the model’s structural design. Like the human brain evolving from reptile to mammal, gaining a neocortex.

These four dimensions aren’t isolated but evolve together. Let’s focus on the first three, as they already have substantial practical results.

Model-Level Evolution: Balancing “Learning New” and “Forgetting Old”

Technical Paths for Continuous Learning

For models to continuously learn, the most direct method is continual fine-tuning.

But there’s a paradox: model parameters are limited, so learning new tasks will inevitably squeeze out old task knowledge. Like your bookshelf has limited space—adding new books means removing old ones.

So researchers came up with three solution directions.

Direction 1: Protect Important Parameters

Elastic Weight Consolidation (EWC) is a clever approach. The core idea: calculate each parameter’s importance to old tasks, and try not to touch those important parameters when learning new tasks.

It’s like putting “must keep” labels on classic books on your shelf, avoiding them when adding new books.
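To make the idea concrete, here is a minimal sketch of the EWC penalty in plain Python. It assumes the usual formulation in which each parameter's importance is a diagonal Fisher information estimate; the names `ewc_penalty`, `total_loss`, and `lam`, and all the numbers, are illustrative rather than taken from any library.

```python
def ewc_penalty(params, old_params, fisher, lam=1.0):
    """Quadratic penalty: (lam / 2) * sum_i F_i * (theta_i - theta_old_i)^2."""
    return 0.5 * lam * sum(
        f * (p - p_old) ** 2
        for p, p_old, f in zip(params, old_params, fisher)
    )


def total_loss(new_task_loss, params, old_params, fisher, lam=1.0):
    """Loss minimized while training on the new task."""
    return new_task_loss + ewc_penalty(params, old_params, fisher, lam)


# Hypothetical values: parameter 0 matters a lot to the old task, parameter 1 barely.
old_params = [1.0, -2.0]
fisher = [10.0, 0.1]

# Moving the important parameter by 0.5 costs far more than moving the other one.
move_important = ewc_penalty([1.5, -2.0], old_params, fisher)
move_unimportant = ewc_penalty([1.0, -1.5], old_params, fisher)
print(move_important, move_unimportant)
```

The penalty does not forbid touching important parameters; it just makes each step away from the old value more expensive, which is the “must keep” label in numeric form.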

Direction 2: Experience Replay

This method is more straightforward: store some historical data, mix it in when training on new tasks.

The problem is storage cost. A pre-trained model might have seen trillions of tokens—you can’t store them all. So the practical approach is selective storage—only keeping the most critical samples.
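A minimal sketch of a bounded replay buffer follows. Note the swap: it uses reservoir sampling, which keeps a uniform random subset of everything seen, not the “most critical” samples; a real system would plug in an importance-based selection rule instead. The class and method names are hypothetical.

```python
import random


class ReplayBuffer:
    """Fixed-size buffer filled by reservoir sampling."""

    def __init__(self, capacity, seed=0):
        self.capacity = capacity
        self.items = []
        self.seen = 0
        self.rng = random.Random(seed)

    def add(self, example):
        self.seen += 1
        if len(self.items) < self.capacity:
            self.items.append(example)
        else:
            # Keep the new example with probability capacity / seen.
            j = self.rng.randrange(self.seen)
            if j < self.capacity:
                self.items[j] = example

    def mixed_batch(self, new_examples, replay_count):
        """Return the new examples plus up to replay_count stored old ones."""
        k = min(replay_count, len(self.items))
        return list(new_examples) + self.rng.sample(self.items, k)


buffer = ReplayBuffer(capacity=100)
for i in range(10_000):
    buffer.add(("old_task", i))

new_data = [("new_task", i) for i in range(8)]
batch = buffer.mixed_batch(new_data, replay_count=4)
print(len(buffer.items), len(batch))
```

Storage stays constant no matter how much old data streams past, which is the point when the original corpus is far too large to keep.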

Direction 3: Dynamic Architecture Expansion

Add new model capacity for new tasks. Techniques like LoRA (Low-Rank Adaptation) freeze the original model parameters and train only small, newly added modules.

It’s like putting a small shelf next to your bookshelf—new books go on the small shelf, not touching the original books.
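Here is a rough sketch of a LoRA-style linear layer in plain Python, assuming the common setup where the frozen weight `W` is augmented by a low-rank product `B A` scaled by `alpha / rank`. The class `LoRALinear` and the helper `matvec` are illustrative; real implementations live in tensor libraries and handle training as well.

```python
import random


def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]


class LoRALinear:
    """y = W x + (alpha / rank) * B (A x); W is frozen, only A and B would be trained."""

    def __init__(self, weight, rank, alpha=1.0, seed=0):
        rng = random.Random(seed)
        d_out, d_in = len(weight), len(weight[0])
        self.weight = weight  # frozen pretrained weights, never updated
        self.scale = alpha / rank
        # A starts small and random, B starts at zero, so the adapter is a no-op at first.
        self.A = [[rng.gauss(0.0, 0.01) for _ in range(d_in)] for _ in range(rank)]
        self.B = [[0.0] * rank for _ in range(d_out)]

    def forward(self, x):
        base = matvec(self.weight, x)
        update = matvec(self.B, matvec(self.A, x))
        return [b + self.scale * u for b, u in zip(base, update)]

    def trainable_count(self):
        return len(self.A) * len(self.A[0]) + len(self.B) * len(self.B[0])


W = [
    [1.0, 2.0, 0.0, 0.0],
    [0.0, 1.0, 0.0, 3.0],
    [0.5, 0.0, 0.0, 0.0],
    [0.0, 0.0, 1.0, 1.0],
]
layer = LoRALinear(W, rank=1)
x = [1.0, 1.0, 1.0, 1.0]
print(layer.forward(x), layer.trainable_count())
```

With rank 1 on a 4x4 layer the adapter has 8 trainable values against 16 frozen ones; at realistic layer sizes the gap is far larger, which is the “small shelf” in practice.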

Real-World Case: Agent0’s Self-Training

A team at Washington University in St. Louis did an interesting experiment called Agent0.

They designed a dual-agent system: a curriculum agent responsible for generating problems, and an execution agent responsible for solving them. Both evolve through self-play.

Interestingly, although only trained on math problems, the model’s general reasoning ability improved by 24%. On the MMLU-Pro benchmark, accuracy rose from 51.8% to 63.4%.

What does this show? Multi-step reasoning abilities cultivated through tool assistance can transfer to other domains.

Like the logical thinking you develop through learning math can help you write clearer code.

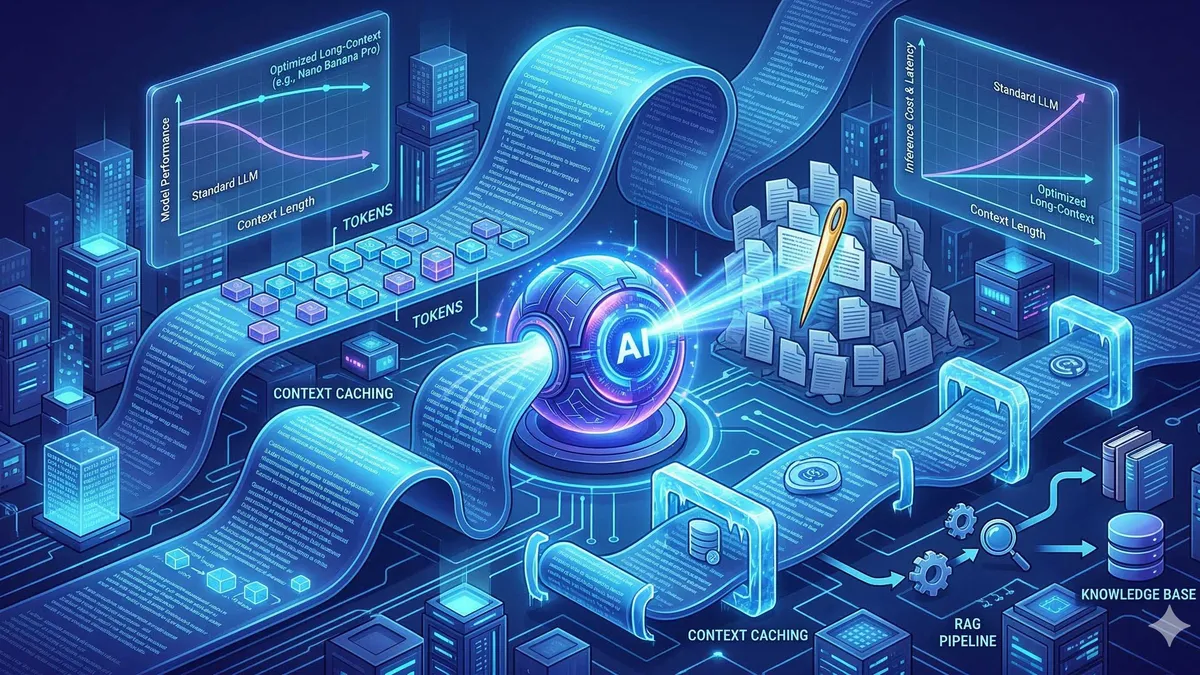

Context Evolution: The Rise of Memory Systems

Model-level evolution has a hard limitation: every update requires changing parameters, which is costly and risky.

In-Context Learning offers another path: don’t modify model parameters, but let the model “temporarily learn” new knowledge through context windows.

You’ve probably noticed: give ChatGPT a few examples, and it can output in your requested format. That’s In-Context Learning at work.
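As a small illustration, a few-shot prompt is just demonstrations concatenated ahead of the new input. The helper `build_few_shot_prompt` and the `Input:`/`Output:` layout below are assumptions made for this sketch, not a format any particular model requires.

```python
def build_few_shot_prompt(examples, query, instruction=""):
    """Assemble a prompt from (input, output) demonstration pairs plus a new input."""
    lines = [instruction] if instruction else []
    for source, target in examples:
        lines.append(f"Input: {source}")
        lines.append(f"Output: {target}")
    lines.append(f"Input: {query}")
    lines.append("Output:")  # left open for the model to complete
    return "\n".join(lines)


examples = [
    ("2025-03-24", "March 24, 2025"),
    ("2024-12-01", "December 1, 2024"),
]
prompt = build_few_shot_prompt(
    examples, "2026-01-15", instruction="Rewrite each date in long form."
)
print(prompt)
```

Nothing about the model changes here; the “learning” lives entirely in the text sent with the request.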

But traditional in-context learning has a limitation—it forgets when the session ends. Next conversation, you have to teach it all over again.

Breakthrough in Persistent Memory Systems

An important development in 2025: mainstream models started configuring persistent memory systems.

Frameworks like Mem0 and Second Me enable models to remember user preferences, historical conversations, and common instructions across sessions.

Honor’s YOYO agent is a typical example. Within three months, it expanded scenario coverage from 200 to 3000.

How?

The core mechanism: data generated from each user interaction is converted to vectors and stored in a database. Next conversation, the model retrieves relevant historical memories and processes them together with current input.

It’s like having an assistant who never forgets, remembering all your work habits and preferences.
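The store-then-retrieve loop can be sketched in a few lines. This is a toy under stated assumptions: `embed` is a bag-of-words stand-in for a real embedding model, `MemoryStore` is a hypothetical class and not the API of Mem0 or any other framework, and the memories are invented.

```python
import math
from collections import Counter


def embed(text):
    """Toy embedding: word counts. A real system would call an embedding model."""
    return Counter(text.lower().split())


def cosine(a, b):
    dot = sum(a[w] * b[w] for w in a if w in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class MemoryStore:
    def __init__(self):
        self.records = []  # list of (vector, original text)

    def remember(self, text):
        self.records.append((embed(text), text))

    def recall(self, query, k=2):
        q = embed(query)
        ranked = sorted(self.records, key=lambda r: cosine(q, r[0]), reverse=True)
        return [text for _, text in ranked[:k]]


memory = MemoryStore()
memory.remember("user prefers handling email in the morning")
memory.remember("user usually books meeting room B")
memory.remember("user likes oat milk latte")

question = "which meeting room should I book"
context = memory.recall(question, k=1)
# Retrieved memories are prepended to the current input before calling the model.
prompt = "Relevant memories:\n" + "\n".join(context) + "\n\nUser: " + question
print(prompt)
```

The model weights never change; what persists across sessions is the store, and what the model sees is the current input plus whatever the store returns.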

Vector Databases: The Physical Carrier of Memory

Speaking of memory systems, we have to mention vector databases. This is one of the hottest components in the 2024-2025 AI tech stack.

The principle isn’t actually complicated: convert text, images, and audio into high-dimensional vectors and store them in a database. When querying, also convert to vectors and find the most similar stored vectors.
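One common way to define “most similar” is cosine similarity. No metric is named here, so treat this as a typical choice rather than what any given product uses; the query vector q, stored vectors v_i, and result count k are notation introduced for this sketch.

```latex
\operatorname{sim}(q, v_i) = \frac{q \cdot v_i}{\lVert q \rVert \, \lVert v_i \rVert}

\text{return the } k \text{ stored vectors } v_i \text{ with the largest } \operatorname{sim}(q, v_i)
```

A score near 1 means the two vectors point in nearly the same direction, regardless of their lengths.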

Sounds abstract? Think about how humans remember things.

You don’t remember someone’s exact appearance, but rather feature vectors like “tall, wears glasses, somewhat rough voice.” Next meeting, you recognize them through these features.

Vector databases are simulating this process.

Products like Cloudflare Vectorize, Pinecone, and Milvus are essentially solving the same problem: how to efficiently store and retrieve massive amounts of memory.

Meta-Learning: Learning How to Learn

The continuous learning we just discussed has a core question: “how to learn new knowledge.” But there’s an even deeper question:

How to make models learn learning itself?

Sutton also proposed the “meta-method” theory, with a core viewpoint:

Don’t freeze knowledge in the model, but encode “the ability to acquire knowledge” into the code.

Sounds like a tongue twister? Put differently:

Traditional models learn “answers” (like the solution to a specific math problem), while meta-learning learns “methods” (like how to analyze a new math problem).

The Magic of Few-Shot Learning

The most intuitive application of meta-learning is few-shot learning.

Traditional deep learning requires massive data training, while meta-learning enables models to learn new tasks from just a few examples.

It’s like the difference between smart students and average students:

Average students need to do 100 problems to master a pattern. Smart students can look at 3 example problems, summarize the pattern, and solve new problems of the same type.

2025’s GPT-4o and Claude 3.5, to some extent, already possess this meta-learning ability. Give them a few format examples, and they can mimic the output.

Technical Implementation: From MAML to Prototypical Networks

There are several schools of specific meta-learning algorithms.

MAML (Model-Agnostic Meta-Learning): Train an “easy to fine-tune” initialization model. When encountering new tasks, it can adapt with just a few gradient descent steps.
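In standard notation, MAML's two nested updates can be written as below, shown with a single inner gradient step for brevity. Here θ is the shared initialization, α and β are the inner and outer learning rates, and L with subscript T_i is the loss on task T_i.

```latex
\theta_i' = \theta - \alpha \, \nabla_\theta \mathcal{L}_{\mathcal{T}_i}(\theta)
\qquad \text{(inner step: adapt to task } \mathcal{T}_i \text{)}

\theta \leftarrow \theta - \beta \, \nabla_\theta \sum_i \mathcal{L}_{\mathcal{T}_i}(\theta_i')
\qquad \text{(outer step: improve the initialization)}
```

The outer update scores the initialization by how well the model performs after adapting, which is what makes it “easy to fine-tune.”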

Prototypical Networks: Learn how to map samples of different categories into feature space, making same-class samples cluster together while different-class samples separate.
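The classification step of a prototypical network is simple enough to sketch directly. The example below assumes the embeddings are already given (the 2-D vectors are made up) and skips training the encoder that would produce them; the function names are illustrative.

```python
import math


def prototypes(support):
    """Average the embeddings of each class; support maps label -> list of vectors."""
    return {
        label: [sum(dim) / len(vectors) for dim in zip(*vectors)]
        for label, vectors in support.items()
    }


def classify(query, protos):
    """Assign the query to the class whose prototype is nearest (Euclidean distance)."""
    return min(protos, key=lambda label: math.dist(query, protos[label]))


# Hypothetical 2-D embeddings; a trained encoder would produce these in practice.
support = {
    "cat": [[0.9, 0.1], [1.1, -0.1], [1.0, 0.0]],
    "dog": [[-1.0, 0.2], [-0.8, 0.0], [-1.2, -0.2]],
}
protos = prototypes(support)
print(classify([0.7, 0.3], protos))
```

Adding a new class needs only a handful of embedded examples to average, with no gradient steps at all, which is why the approach suits few-shot settings.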

These technical terms might sound dry. But there’s only one core idea:

Train not the ability to do specific tasks, but the ability to adapt quickly to new ones.

Architecture-Level Evolution: From Single Brain to Layered Systems

The approaches covered so far (model-level updates, context and memory, meta-learning) all improve on existing architectures. But some researchers believe that to truly achieve self-evolution, the architecture itself must change.

Nested Learning: Layered Learning Architectures

A research trend in 2025 is dividing models into multiple layers.

The bottom layer handles basic perception (recognizing text, images), the middle layer handles reasoning (logical analysis), and the top layer handles planning and decision-making.

This is somewhat like the structure of the human brain: the brainstem manages basic life activities, the limbic system manages emotions and memory, and the cerebral cortex handles advanced cognition.

The Titans framework is an attempt in this direction—adding specialized memory layers between different levels, allowing information to be remembered and retrieved as it flows between layers.

VisPlay: Self-Play Visual Learning Framework

A research team at the University of Illinois did an even more interesting experiment: VisPlay.

They designed a “self-play” framework where a vision-language model simultaneously plays two roles:

One responsible for generating questions (based on images), and another responsible for answering questions (based on images).

The two roles promote each other: the questioner tries to generate difficult questions, and the answerer tries to answer correctly.

The results were amazing:

The Qwen2.5-VL-3B model, after three rounds of self-training, improved its comprehensive score from 30.61 to 47.27. On the hallucination detection task, accuracy soared from 32.81% to 94.95%.

The key point is that the entire process required no manually labeled data. The model taught itself.

It’s like a student without a teacher who, by constantly writing and answering their own questions, manages to become a top student.

Real-World Applications: Self-Evolution Is Already Here

We’ve talked about a lot of theory, but what’s the actual deployment situation?

AI Phones: From Tools to Partners

Honor’s Magic8 series might be the first AI phone to truly achieve “self-evolution.”

Its YOYO agent isn’t a simple voice assistant, but an intelligent partner that “remembers” your habits.

You say “Help me schedule a meeting with Zhang on Wednesday afternoon and prepare a project proposal,” and it automatically filters your calendar, sends invitations, and generates a proposal framework.

More importantly, it learns. After three months of use, it remembers you prefer handling emails in the morning, remembers your coffee shop preferences, remembers which meeting room you usually use.

The data speaks for itself: scenario coverage expanded from an initial 200 to 3000 in three months. This wasn’t manually added by engineers—it was the result of the model learning and evolving on its own.

AI Agents: From Dialogue to Task Completion

The hottest AI product form in 2025 is undoubtedly Agents.

Manus claims to be “the world’s first general-purpose AI Agent.” Its breakthrough is: instead of chatting in a dialogue box with you, it actually does work for you.

You say “Screen 15 resumes and generate an analysis report,” and it actually unpacks the files, extracts the information, ranks the candidates, and generates the report. The entire process is automated.

Claude 3.5’s Computer Use goes even further, capable of directly controlling your computer. Opening browsers, filling forms, clicking buttons—it’s like having a virtual assistant sitting at your computer.

AutoGLM implements cross-app operations on phones. You say “Help me book a flight to Shanghai tomorrow and arrange airport pickup,” and it actually opens the booking app, selects flights, pays, then opens the ride-hailing app to book pickup.

The common characteristic of these Agents: they’re not passive Q&A machines, but active task executors. They break down tasks, plan steps, call tools, execute operations, and report results.
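The break-down, call-tools, report loop can be sketched as a toy. Everything here is hypothetical: the three tools, the `name, years` resume format, and especially the fixed `plan` list, which a real agent would ask the model to produce and revise as it goes.

```python
def extract(resumes):
    """Parse 'name, years' strings into records."""
    records = []
    for line in resumes:
        name, years = line.split(",")
        records.append({"name": name.strip(), "years": int(years)})
    return records


def rank(records):
    """Order candidates by years of experience, most first."""
    return sorted(records, key=lambda r: r["years"], reverse=True)


def report(records):
    """Format the ranked records as a numbered list."""
    return "\n".join(
        f"{i}. {r['name']} ({r['years']} yrs)" for i, r in enumerate(records, 1)
    )


TOOLS = {"extract": extract, "rank": rank, "report": report}


def run_agent(plan, task_input, tools=TOOLS):
    """Run each planned step, passing the previous step's output to the next tool."""
    state, trace = task_input, []
    for step in plan:
        state = tools[step](state)
        trace.append(step)
    return state, trace


plan = ["extract", "rank", "report"]  # stand-in for a model-generated plan
output, trace = run_agent(plan, ["Ana, 3", "Bo, 7", "Cy, 5"])
print(output)
```

The dispatch loop is the easy part; what separates products is how well the model plans, recovers from failed steps, and decides when it is done.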

This is the evolution from “dialogue interaction” to “task completion.”

Professional Domains: Continuous Learning in Medical Diagnosis

Medical AI might be one of the most valuable deployment scenarios for self-evolution technology.

Medical knowledge updates rapidly, with new diseases, treatments, and drugs constantly emerging. Traditional static models simply can’t keep up.

Continuously learning medical AI can learn from each new case, constantly updating its diagnostic capabilities.

Of course, the unique nature of the medical field brings unique challenges: extremely high error costs and strict regulatory requirements. So actual deployment is more cautious, typically using a “human review + AI learning” hybrid model.

Technical Challenges: The Other Side of the Ideal

We’ve talked about many technical breakthroughs and application scenarios, but we should also discuss the real challenges.

Error Accumulation: The Risk of Learning Wrong

Self-evolution has a fatal risk: if early learning acquires incorrect knowledge, subsequent self-reinforcement will make errors increasingly ingrained.

It’s like a person who formed an incorrect worldview in childhood—in the absence of external correction, this incorrect cognition will become increasingly solidified.

The VisPlay research team mentioned this issue. Their solution is designing some quality control mechanisms, like self-consistency checks and ambiguity dynamic strategy optimization.

But whether these mechanisms can remain effective during long-term self-learning processes still needs more verification.
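One common form of self-consistency check is majority voting: sample several answers to the same question and keep the result as a training label only if enough of them agree. The sketch below illustrates that general idea and is not VisPlay's actual mechanism; the function name and the 0.6 threshold are assumptions.

```python
from collections import Counter


def self_consistent_label(answers, min_agreement=0.6):
    """Return the majority answer if enough samples agree, else None (discard)."""
    if not answers:
        return None
    answer, count = Counter(answers).most_common(1)[0]
    return answer if count / len(answers) >= min_agreement else None


# Four of five samples agree, so the answer is kept as a pseudo-label.
confident = self_consistent_label(["42", "42", "42", "41", "42"])
# No answer reaches 60% agreement, so the example is dropped from training.
uncertain = self_consistent_label(["A", "B", "C", "A", "B"])
print(confident, uncertain)
```

Agreement is not correctness: a model that is consistently wrong passes this filter, which is exactly why long-run verification still matters.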

Computing Resources: A Money-Burning Game

Continuous learning isn’t a free lunch.

Every parameter update, memory retrieval, and model inference requires massive computing power. For individual developers and small companies, the cost pressure is significant.

Not to mention data storage costs. A medium-scale memory system easily requires tens of TB of storage space.

This is also why most successful cases we currently see are products from large companies—they have sufficient resources to invest.

Evaluation Standards: How Do We Know It Evolved?

There’s an even more fundamental question: how to evaluate the effectiveness of self-evolution?

Traditional model evaluation uses fixed test sets. But self-evolving models are constantly changing—the version tested today might already be different from the version tested tomorrow.

Harder still: how do you know whether a model’s change is “evolution” or “degradation”?

It might perform better on one task but worse on another. How to weigh this trade-off?
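A first step, short of a unified framework, is simply to compare per-task scores between two snapshots and make the regressions visible. The sketch below does that; the task names, scores, and the 0.5-point tolerance are all made up for illustration.

```python
def compare_versions(before, after, tolerance=0.5):
    """Group shared tasks into improved / regressed / unchanged by score delta."""
    result = {"improved": [], "regressed": [], "unchanged": []}
    for task in sorted(before.keys() & after.keys()):
        delta = after[task] - before[task]
        if delta > tolerance:
            result["improved"].append(task)
        elif delta < -tolerance:
            result["regressed"].append(task)
        else:
            result["unchanged"].append(task)
    return result


# Hypothetical benchmark scores for two snapshots of the same model.
before = {"math": 50.0, "coding": 60.0, "translation": 72.0}
after = {"math": 60.0, "coding": 60.2, "translation": 65.0}
result = compare_versions(before, after)
print(result)
```

This only surfaces the trade-off; whether ten points of math is worth seven points of translation remains a product decision, not something the code can answer.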

Currently, the industry doesn’t have a unified evaluation framework. This is also a problem that needs to be solved in the future.

Future Outlook: The Key Path to AGI

Despite the challenges, I remain optimistic about the future of self-evolving AI.

Short-term Trends (2026-2027)

Memory systems will become standard for mainstream models. This trend is already visible and will become more widespread in 2026.

More Agent products will introduce self-evolution capabilities. The transition from passive response to active learning will accelerate next year.

Edge-side AI will take the lead in deploying self-evolution technology, because on-device models have natural access to user data and privacy protection is easier to implement there.

Mid-term Trends (2028-2030)

Architecture-level innovation will have breakthroughs. Layered models, memory-enhanced architectures, and multi-agent systems—these research directions will mature in the coming years.

The combination of symbolism and neural networks will see a revival. Pure data-driven methods have ceilings; fusing knowledge reasoning and symbolic logic might be the breakthrough point.

Evaluation standards and regulatory frameworks will be gradually established. Industry standards, safety specifications, and ethical guidelines—these are necessary conditions for technology popularization.

Long-term Vision (2035)

Self-evolving AI will become a key path to AGI.

A true artificial intelligence shouldn’t just be a static container of human knowledge, but should possess the ability to continuously learn and self-update.

Just like human wisdom doesn’t come from how much knowledge is remembered, but from the ability to learn new knowledge and solve new problems.

By then, AI might truly become our “partner,” not just a “tool.”

Final Thoughts

While writing this article, I asked Claude a question: “Do you think you’re evolving?”

Its answer was interesting: “I update context in every conversation, which is somewhat a form of learning. But true evolution requires changing model parameters, which I can’t do.”

Well, at least it’s honest.

The path of self-evolving AI is still long, with technical challenges, business challenges, and ethical challenges at every turn.

But the direction is right. An AI that only mechanically repeats human knowledge is ultimately just a tool. An AI that can continuously learn and self-evolve might truly become intelligent.

For developers, now is a good time. Memory system frameworks are mature, continuous learning technical paths are becoming clear, and deployment scenarios are increasingly defined.

Start with memory systems to build personalized user experiences. Start with incremental learning to solve knowledge update problems in specific scenarios.

No need to pursue AGI in one step, but every step can make AI a bit more “intelligent.”

This process itself is pretty cool.

FAQ

What is self-evolving AI and how does it differ from traditional AI?

What is catastrophic forgetting and how is it solved?

• Elastic Weight Consolidation (EWC): Protect important parameters

• Experience Replay: Mix historical data for training

• Dynamic Architecture Expansion: Add new modules with LoRA and similar techniques

What are the four technical paths of self-evolving AI?

What are the practical application scenarios for self-evolving AI?

What technical challenges does self-evolving AI face?

How can developers practice self-evolving AI technology?

• Memory Systems: Integrate frameworks like Mem0 to build user profiles

• Incremental Learning: Learn continuous learning techniques like EWC, LoRA

• Meta-Learning: Research Few-Shot Learning scenarios

• Edge Deployment: Leverage user data advantages for personalization

References

- A Survey of Self-Evolving Agents: On Path to Artificial Super Intelligence - Joint paper by 16 teams including Princeton

- MM-Zero: Multi-Modal Zero-Data Self-Evolution - Research by University of Maryland and other institutions

- Agent0: Self-Play Framework for Math Reasoning - Washington University in St. Louis

- VisPlay: Self-Supervised Visual Learning - University of Illinois and others

- Tencent Research Institute: Self-Evolving AI Development Report (2025)

- Roland Berger: Five Trends in China’s Generative AI Market 2025

- Huawei: Intelligent World 2035 Report

- Microsoft Research: Six AI Trends for 2025

16 min read · Published on: Mar 24, 2026 · Modified on: Mar 24, 2026
