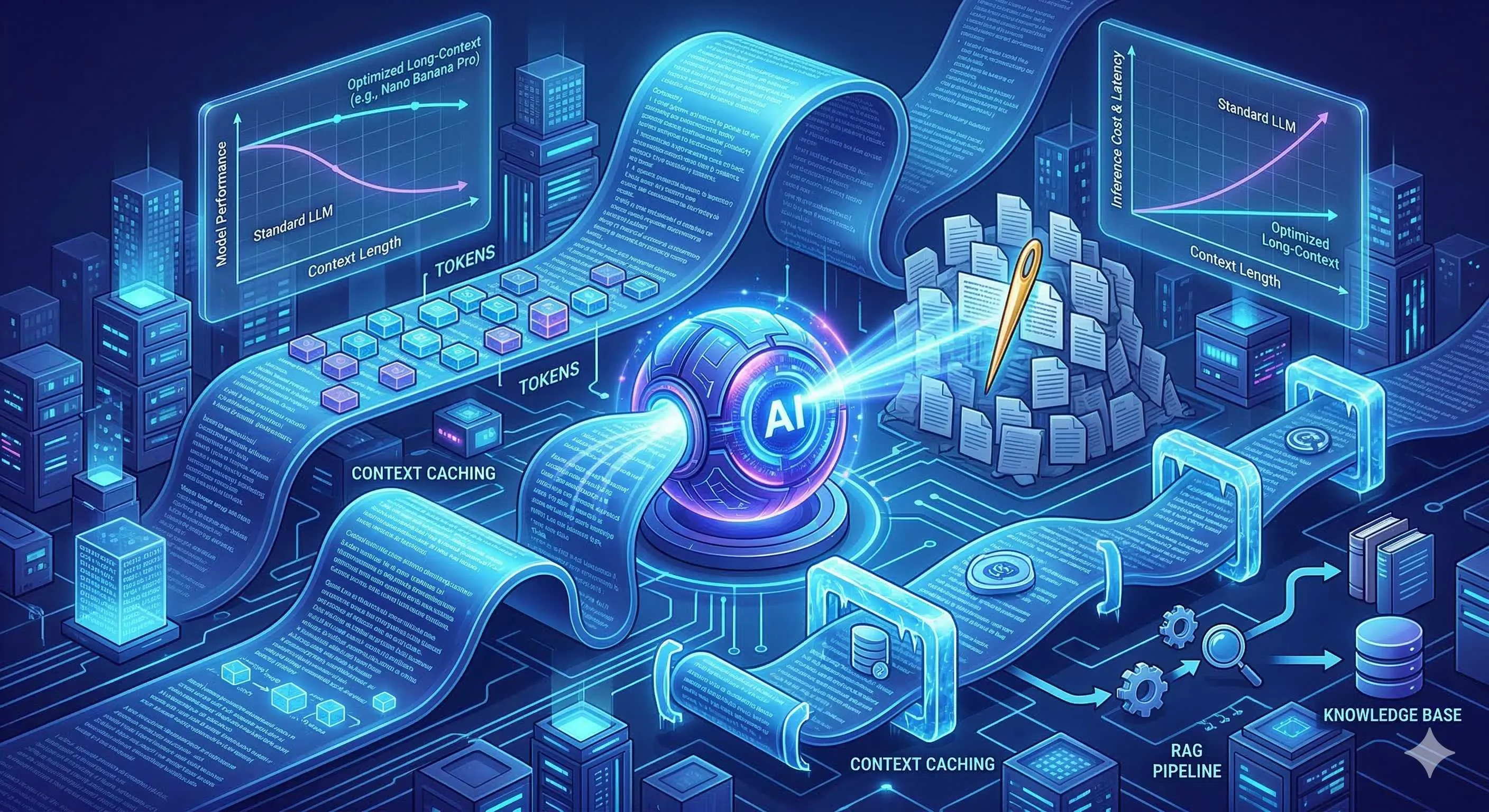

Goodbye Vector Databases? Gemini 2M Token Long Context and Context Caching Performance & Cost Analysis

Honestly, when I first saw the numbers for Gemini 1.5 Pro, I was skeptical.

A 2 million token context window? What does that mean? Roughly equivalent to stuffing in the entire Three-Body Problem trilogy plus several short stories, then telling the AI: “Read all of this, I have some questions.”

As a developer who’s been working with RAG systems for nearly two years, my first reaction wasn’t excitement—it was caution. Is this another technology with impressive lab numbers but disappointing real-world performance? More importantly—if the vector database + embedding + reranking pipeline I spent months building could be replaced by Google saying “just throw it all to me,” what does that make me?

With these mixed feelings, I decided to test it myself.

Gemini Long Context Capability Overview

Evolution from 1.5 Pro to 3.1 Pro

Let’s briefly review Gemini’s long context evolution.

Early 2024: Gemini 1.5 Pro launched, shocking the industry with a 1 million token context window. Months later, it upgraded to 2 million tokens. At the time, Claude 3 was hovering around 200K tokens, and GPT-4 Turbo was at 128K.

Honestly, this gap was staggering. It was as if everyone was racing bicycles and someone suddenly showed up in a sports car.

The later Gemini 2.0 and 2.5 series kept pushing in this direction, while the latest Gemini 3.1 Pro “shrunk” the window back to 1 million tokens but made qualitative improvements in reasoning quality and multimodal understanding. Google’s explanation: rather than blindly chasing numbers, focus on quality first.

I actually agree with this approach. After all, being able to fit an entire book but not understand it, versus precisely comprehending core content with slightly smaller capacity—the latter is obviously more practical.

How Much Content Can 2 Million Tokens Actually Hold?

Many people have no concept of these numbers. Let me convert them:

- Approximately 1.5 million English words, or 3 million Chinese characters

- Roughly the entire Harry Potter series (7 books)

- About 10 years of technical blog posts

- Or all source code (with comments) of a medium-sized Python project

In other words, most enterprise internal knowledge bases can be stuffed in at once.

Take my situation as an example. Our company Wiki, technical docs, product requirements, meeting notes—altogether just hundreds of thousands of words. Previously, I’d have to slice them, vectorize, build indexes, and worry about retrieval quality. Now? Just throw them all to Gemini and be done.
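To make the conversion concrete, here is a minimal sketch that estimates token counts from word counts. It assumes the rough ratio implied by the figures above (2M tokens for about 1.5M English words); the function names and the 400,000-word knowledge base are hypothetical, and real tokenizers will give different numbers.

```python
# Rule of thumb taken from the figures above: 2M tokens ~ 1.5M English words.
WORDS_PER_TOKEN = 1_500_000 / 2_000_000  # 0.75, an approximation only


def estimate_tokens(word_count: int) -> int:
    """Estimate the token count of English text from its word count."""
    return round(word_count / WORDS_PER_TOKEN)


def fits_in_window(word_count: int, window_tokens: int = 2_000_000) -> bool:
    """Return True if the estimated token count fits in the context window."""
    return estimate_tokens(word_count) <= window_tokens


wiki_words = 400_000  # hypothetical size of an internal knowledge base
print(estimate_tokens(wiki_words), fits_in_window(wiki_words))
```

For a real decision, count tokens with the model's own tokenizer rather than relying on this ratio.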

How to describe the feeling? It’s like switching from manual to automatic transmission. At first you worry about losing control, but once you get used to it, you can’t go back.

Multimodal Long Context: More Than Text

Gemini also has an easily overlooked advantage: its long context is multimodal.

What does this mean? You can simultaneously throw in an hour of video, dozens of PDF pages, several charts, and ask: “What contradictions exist between the data in this video and the statistics on page 15 of the PDF?”

This kind of cross-modal correlation analysis is difficult for traditional RAG. How do you segment video? How do you vectorize charts? These aren’t simple problems.

I previously tested with a project containing product demo videos, user feedback tables, and design drafts. Gemini not only accurately answered questions about video content but also pointed out conflicts between certain design decisions and user feedback. This global comprehension ability is truly impressive.

”Needle in a Haystack” Test: The Truth About Gemini’s Recall Rate

What is the Needle In A Haystack Test

At this point, you might ask: large capacity is one thing, but can it actually remember?

This was my biggest concern too. After all, who wants to pay big money for an AI to read 2 million words only to remember the last few paragraphs?

The industry has a specific test method called “Needle In A Haystack.” The principle is simple: hide a specific sentence (like “my favorite color is purple”) inside an extremely long text made up of otherwise irrelevant content, then ask the AI what that sentence was.

If the AI answers accurately, it means it truly “found” the needle in that long text. Testing repeats at different lengths and positions, ultimately producing a recall rate curve.
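The test procedure can be sketched in a few lines. This is an illustrative harness, not the one used in any published benchmark: `build_haystack`, `recall_rate`, and `fake_model` are hypothetical names, and `fake_model` is a deliberately weak stand-in that only "reads" the end of the context, so the script runs without any API.

```python
from typing import Callable, List


def build_haystack(filler: List[str], needle: str, depth: float) -> str:
    """Insert `needle` at a relative position (0.0 = start, 1.0 = end)."""
    if not 0.0 <= depth <= 1.0:
        raise ValueError("depth must be between 0.0 and 1.0")
    position = round(depth * len(filler))
    sentences = filler[:position] + [needle] + filler[position:]
    return " ".join(sentences)


def recall_rate(ask: Callable[[str, str], str], filler: List[str],
                needle: str, question: str, expected: str,
                depths: List[float]) -> float:
    """Fraction of insertion depths at which the answer contains `expected`."""
    hits = 0
    for depth in depths:
        haystack = build_haystack(filler, needle, depth)
        answer = ask(haystack, question)
        if expected.lower() in answer.lower():
            hits += 1
    return hits / len(depths)


def fake_model(context: str, question: str) -> str:
    """Stand-in for a real model call: only 'reads' the last 200 characters."""
    return context[-200:]


filler = [f"Filler sentence number {i}." for i in range(1000)]
needle = "My favorite color is purple."
depths = [0.0, 0.25, 0.5, 0.75, 1.0]
score = recall_rate(fake_model, filler, needle,
                    "What is my favorite color?", "purple", depths)
print(f"Recall across depths: {score:.0%}")
```

Swapping `fake_model` for a function that calls a real model, and repeating the run at several context lengths, yields the recall curve described above.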

Interpreting Gemini 1.5 Pro Official Data

Google’s published data is quite impressive:

- At 530K token tests: 100% recall

- At 1M token tests: 99.7% recall

- Even at extreme 10M token tests: maintains 99.2% accuracy

Frankly, seeing these numbers for the first time, I was half-convinced. Official data, you know, often comes from optimal conditions.

But then I saw third-party evaluation agency results, and the data basically matched. Artificial Analysis’s independent tests showed Gemini 1.5 Pro maintains extremely high recall stability across various document lengths, especially in the middle sections of documents, clearly outperforming other models.

This was somewhat surprising, because some long context models I’ve used before often have a “middle forgetting” problem: they remember the beginning and end well but blur the middle. Gemini seems to have solved this well.

Real-World Business Scenario Recall Performance

However, lab data is lab data; real business scenarios are another matter.

I designed a test closer to reality: take a 500K-word technical document set containing API docs, architecture designs, troubleshooting manuals, and other content types. Hide several specific configuration parameters in different positions across documents, then have Gemini answer related questions.

Results?

Honestly, most of the time it found them. But I also discovered some interesting details:

- For clear, structured information (like “what’s the API key validity period”), recall rate is nearly 100%

- But for questions requiring some reasoning (like “what security risks exist in this design based on documentation”), accuracy drops to around 80%

- If questions involve cross-information from multiple documents, Gemini sometimes misses one source

What does this mean? Gemini’s long context capability is indeed powerful, but it’s not omnipotent. Especially when questions require complex reasoning rather than simple retrieval, there’s still room for optimization.

Context Caching Deep Dive

Why Context Caching is Needed

Alright, now we know Gemini can hold content and remember it. But there’s another key question: cost.

2 million tokens sounds great, but if every conversation requires resending all 2 million tokens, the bill might make you cry.

At Gemini 1.5 Pro pricing (February 2026 data), inputs over 128K cost $2.50/million tokens. That means one 2M token request costs $5 just for input. If you have 100 queries a day, that’s $500.

This cost is unacceptable for most applications.

This is where Context Caching comes in.

How Context Caching Works

Simply put, Context Caching allows you to preload and cache contexts that are reused repeatedly. Subsequent queries only need to pass the new question and cache ID, without resending those millions of tokens of background material.

The specific flow is:

- First request: you send documents to Gemini and request cache creation

- Gemini returns a cache ID and preserves these token states on the server

- Subsequent queries: you only pass cache ID + new question

- Billing: new question tokens at the normal rate + cached tokens at a discounted rate + a small cache maintenance fee

The key is that tokens served from the cache are charged at only 10% of the original input price. So what was a $5 input cost per request becomes about $0.50.
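As a rough illustration of the per-request billing, the sketch below uses the rates quoted in this article ($2.50 per million input tokens, cached tokens at 10%). The function name and defaults are my own, and the storage fee is deliberately left out because it is billed by time rather than per request.

```python
def request_input_cost(cached_tokens: int, new_tokens: int,
                       price_per_mtok: float = 2.50,
                       cache_discount: float = 0.10) -> float:
    """Input cost in USD of one request; cached tokens are billed at a discount."""
    cached_cost = cached_tokens / 1_000_000 * price_per_mtok * cache_discount
    new_cost = new_tokens / 1_000_000 * price_per_mtok
    return cached_cost + new_cost


# Resending 2M tokens every time vs. reading them from the cache
uncached = request_input_cost(cached_tokens=0, new_tokens=2_000_000)
cached = request_input_cost(cached_tokens=2_000_000, new_tokens=500)
print(f"uncached: ${uncached:.2f}, cached: ${cached:.2f}")
```

Note that a cached request is not free: the cached tokens are still read, and billed, on every query.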

When I first understood this mechanism, it was a revelation. So Google had already thought about cost issues and provided quite an elegant solution.

Implicit vs Explicit Caching

Gemini offers two caching modes:

Explicit Caching: You actively call the API to create a cache, specifying what content to cache, the TTL (time to live), and other parameters. This method offers the most control and suits datasets with clear boundaries, like knowledge bases or code repositories.

Implicit Caching: Launched after May 2025, the system automatically detects repeated token prefixes and caches them without any action from you. This feature is on by default and is a blessing for development experience.

However, note that Implicit Caching has certain trigger conditions, usually requiring identical prompt prefixes to reach a certain length before taking effect. If your contexts vary greatly between requests, you might not benefit from this.
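Because implicit caching keys on a repeated prefix, the order of a prompt matters: large, stable material should come first and the varying question last. The sketch below illustrates the idea by measuring the shared prefix of two prompts. It compares characters as a simple proxy (the service matches tokens), and `build_prompt` and `shared_prefix_length` are hypothetical helpers.

```python
import os


def shared_prefix_length(prompt_a: str, prompt_b: str) -> int:
    """Number of leading characters two prompts have in common."""
    return len(os.path.commonprefix([prompt_a, prompt_b]))


def build_prompt(stable_context: str, question: str) -> str:
    """Put the large, unchanging material first and the varying question last."""
    return f"{stable_context}\n\nQuestion: {question}"


docs = "Internal documentation paragraph. " * 1000  # placeholder content
good_a = build_prompt(docs, "What is the rate limit?")
good_b = build_prompt(docs, "Who owns the billing API?")

# Anti-pattern: question first, so the prompts diverge almost immediately
bad_a = f"Question: What is the rate limit?\n\n{docs}"
bad_b = f"Question: Who owns the billing API?\n\n{docs}"

print(shared_prefix_length(good_a, good_b), shared_prefix_length(bad_a, bad_b))
```

With the documents first, nearly the whole prompt is a shared prefix; with the question first, only a handful of characters are.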

Cache Hit Rate Impact on Cost

Let’s do some simple math.

Assume your knowledge base is 1M tokens, with 1000 daily queries:

Without caching:

- Daily input tokens: 1,000,000 × 1000 = 1 billion tokens

- Cost: 1B ÷ 1M × $2.50 = $2500/day

With Context Caching:

- Initial load: $2.50 (one-time)

- Cache maintenance: $1.00/million tokens/hour × 1M tokens × 24 hours = $24/day

- Cached reads: every query still reads the 1M cached tokens, billed at 10%, i.e., $0.25/million tokens × 1M tokens × 1000 queries = $250/day

- New question tokens: 1000 × 500 tokens × $2.50/million tokens ≈ $1.25/day

- Total: ~$278/day (including the one-time load)

See that? Cost drops from $2500/day to roughly $278/day, about a 9x difference.
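The arithmetic can be checked with a short script. This is a sketch under the rates quoted in this article, and it assumes that every query reads the full cached context at the 10% rate while the new question tokens are billed at the normal rate. Function and parameter names are hypothetical.

```python
def daily_cost_without_cache(kb_tokens: int, queries: int,
                             price_per_mtok: float = 2.50) -> float:
    """Resend the whole knowledge base with every query (question tokens ignored)."""
    return kb_tokens / 1_000_000 * price_per_mtok * queries


def daily_cost_with_cache(kb_tokens: int, queries: int,
                          question_tokens: int = 500,
                          price_per_mtok: float = 2.50,
                          cache_discount: float = 0.10,
                          storage_per_mtok_hour: float = 1.00,
                          hours: float = 24.0) -> dict:
    """Break the cached scenario into its billing components (USD)."""
    kb_mtok = kb_tokens / 1_000_000
    parts = {
        "initial_load": kb_mtok * price_per_mtok,
        "storage": kb_mtok * storage_per_mtok_hour * hours,
        "cached_reads": kb_mtok * price_per_mtok * cache_discount * queries,
        "questions": question_tokens / 1_000_000 * price_per_mtok * queries,
    }
    parts["total"] = sum(parts.values())
    return parts


baseline = daily_cost_without_cache(1_000_000, 1000)
cached = daily_cost_with_cache(1_000_000, 1000)
for name, value in cached.items():
    print(f"{name:>13}: ${value:,.2f}")
print(f"savings factor: {baseline / cached['total']:.1f}x")
```

Cached reads dominate the bill at high query volumes, so the savings factor approaches the inverse of the cache discount rather than growing without bound.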

That’s the power of Context Caching. Honestly, after calculating this, I started seriously considering migrating some projects from RAG.

Cost Showdown: Long Context vs RAG

Gemini API Pricing Full Analysis (2026 Latest)

To make an accurate comparison, let’s first review Gemini’s latest pricing (as of February 2026):

| Model | Context Window | Input (≤128K prompt) | Input (>128K prompt) | Output |
|---|---|---|---|---|
| Gemini 1.5 Pro | 2M | $1.25/MTok | $2.50/MTok | $5.00/MTok |
| Gemini 1.5 Flash | 1M | $0.075/MTok | $0.15/MTok | $0.60/MTok |
| Gemini 2.5 Pro | 2M | $1.25/MTok | $2.50/MTok | $10.00/MTok |

Note: MTok = Million Tokens

Additionally, Context Caching fee structure:

- Cache storage: $1.00/million tokens/hour

- Cache hit: 10% of original input price

- Cache miss: charged at normal rates
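For quick estimates, the table can be encoded as data. The sketch below copies the prices from the table above; the dictionary keys are informal labels rather than official model IDs, and caching fees are not modeled here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    short_input: float  # USD per million tokens, prompts <= 128K
    long_input: float   # USD per million tokens, prompts > 128K
    output: float       # USD per million output tokens


# Values copied from the table above; keys are informal labels.
PRICES = {
    "gemini-1.5-pro": Pricing(1.25, 2.50, 5.00),
    "gemini-1.5-flash": Pricing(0.075, 0.15, 0.60),
    "gemini-2.5-pro": Pricing(1.25, 2.50, 10.00),
}
TIER_THRESHOLD = 128_000


def request_cost(model: str, prompt_tokens: int, output_tokens: int) -> float:
    """Cost in USD of one uncached request, picking the input tier by prompt size."""
    p = PRICES[model]
    input_rate = p.short_input if prompt_tokens <= TIER_THRESHOLD else p.long_input
    return (prompt_tokens * input_rate + output_tokens * p.output) / 1_000_000


print(f"${request_cost('gemini-1.5-pro', 100_000, 1_000):.4f}")
print(f"${request_cost('gemini-1.5-flash', 1_000_000, 1_000):.4f}")
```

Keeping prices in one table like this makes it easy to rerun the comparison when rates change.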

Hidden Costs of RAG Systems

Now let’s calculate RAG solution costs. On the surface, RAG only needs embedding fees and vector database fees, seemingly cheap. But actually, there are many hidden costs.

Visible costs:

- Embedding API: text-embedding-3-large at $0.13/million tokens

- Vector database: Pinecone Standard ~$70/month, or self-hosted server costs

- LLM generation fees: depends on your model

Hidden costs:

- Development maintenance costs: building and maintaining RAG pipelines requires engineer time

- Retrieval quality tuning: chunk size, overlap, reranking strategies—all need repeated experimentation

- Latency issues: retrieval + generation is a two-step operation, which can lengthen response times

- Recall rate loss: even the best RAG sometimes fails to retrieve relevant content
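To show what the tuning knobs look like in practice, here is a minimal word-based chunker with overlap. It is only a sketch: production pipelines usually split on tokens or document structure, and the default `chunk_size` and `overlap` values are arbitrary placeholders.

```python
from typing import List


def chunk_words(text: str, chunk_size: int = 200, overlap: int = 50) -> List[str]:
    """Split text into word windows of `chunk_size`, each sharing `overlap` words
    with the previous window."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be in [0, chunk_size)")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # the last window already reached the end of the text
    return chunks


sample = " ".join(str(i) for i in range(10))
print(chunk_words(sample, chunk_size=4, overlap=2))
```

Every change to `chunk_size` or `overlap` means re-embedding and re-indexing the corpus, which is where much of the tuning time goes.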

I once spent two full weeks tuning a RAG system on a project—adjusting chunk sizes, swapping embedding models, trying various reranking strategies. Final recall improved from 75% to 85%, but still not perfect.

The human cost of these two weeks, converted to money, might exceed a year’s API fees.

Finding the Tipping Point: When to Switch?

So much talk, but when should you choose long context + Context Caching versus sticking with RAG?

I’ve drawn a decision diagram to help you quickly judge:

Scenarios suited for long context:

- Document totals under 2M tokens (~3000 PDF pages)

- High query frequency (hundreds+ per day)

- Need cross-document correlation analysis

- Some tolerance for latency (actually faster than RAG)

- Don’t want to maintain complex retrieval infrastructure

Scenarios suited for RAG:

- Massive document totals (tens of millions+ tokens)

- Very low query frequency (a few to dozens per day)

- Need precise fragment-level citation and source tracing

- Extremely cost-sensitive with frequent document updates

- Already have mature RAG infrastructure
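The query-frequency criterion can be made a bit more concrete. The sketch below computes the daily query count at which caching becomes cheaper than resending the full context uncached, using the rates quoted in this article. It compares cached against uncached long context only, not against RAG, and it ignores the one-time cache load. The knowledge base size cancels out, so it is not a parameter.

```python
import math


def break_even_queries_per_day(price_per_mtok: float = 2.50,
                               cache_discount: float = 0.10,
                               storage_per_mtok_hour: float = 1.00,
                               hours_cached: float = 24.0) -> int:
    """Smallest daily query count at which caching is strictly cheaper than
    resending the full context with every query."""
    storage_per_mtok_day = storage_per_mtok_hour * hours_cached
    saving_per_query_per_mtok = price_per_mtok * (1 - cache_discount)
    return math.floor(storage_per_mtok_day / saving_per_query_per_mtok) + 1


print(break_even_queries_per_day())                  # cache kept alive all day
print(break_even_queries_per_day(hours_cached=8.0))  # cache kept for 8 hours
```

Keeping the cache alive only during busy hours lowers the break-even point, which is one reason TTL management matters.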

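For example, take a customer service knowledge base with 500K words of documents (roughly 670K tokens) and 500 daily queries. At the rates above, long context + Context Caching comes to roughly $100/day: about $16 for cache storage and about $83 for cached reads. A RAG solution has lower per-query fees, but once you add the vector database and development and maintenance costs, it might actually be more expensive.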

But if you’re a legal document platform with hundreds of millions of words of documents, RAG is still the better choice.

Practical Guide: Context Caching Integration

Prerequisites and Limitations

If you decide to try Context Caching, first check these prerequisites:

- Model version: Need Gemini 1.5 Pro or above

- Token count: Cached content needs at least 32,768 tokens (cache creation fails below this minimum)

- Validity period: Cache TTL defaults to 1 hour if not set; you can set a longer TTL or extend it later

- Region restrictions: Some regions may not support yet, check official documentation

Complete Python SDK Example

Here’s complete integration code you can use directly:

```python
import datetime

import google.generativeai as genai
from google.generativeai import caching

# Configure API Key
genai.configure(api_key="YOUR_API_KEY")

# Prepare content to cache
# Assume you have a large document
document_content = """
[Your long document content, at least 32768 tokens]
"""

# Create cache
cache = caching.CachedContent.create(
    model='gemini-1.5-pro-002',
    display_name='knowledge_base_cache',
    system_instruction='You are a professional technical assistant, answering based on provided technical documents.',
    contents=[document_content],
    ttl=datetime.timedelta(hours=1),  # Cache 1 hour
)

print(f"Cache created, ID: {cache.name}")
print(f"Token count: {cache.usage_metadata.total_token_count}")

# Use cache for conversation
model = genai.GenerativeModel.from_cached_content(cached_content=cache)

# Subsequent queries only need to pass question, no need to resend documents
response = model.generate_content("What is the API rate limiting strategy mentioned in the document?")
print(response.text)

# Extend cache validity
cache.update(ttl=datetime.timedelta(hours=2))

# Remember to delete when done (or wait for automatic expiration)
# cache.delete()
```

Cache Lifecycle Management

In practical applications, you need to consider cache lifecycle management:

Creation timing: Usually preload commonly used documents at application startup, or create on-demand when users upload documents.

Renewal strategy: If a cache is about to expire but there are still active queries, renew it automatically in the background.

Cleanup strategy: For caches no longer in use, delete promptly to save costs.
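The renewal strategy can be expressed as a small pure function that a background job might call before extending a cache's TTL. This is a sketch only: `should_renew`, the 5-minute renewal window, and the 15-minute idle limit are hypothetical choices, and tracking the time of the last query is left to your application.

```python
import datetime as dt


def should_renew(expire_time: dt.datetime, last_query_time: dt.datetime,
                 now: dt.datetime,
                 renew_window: dt.timedelta = dt.timedelta(minutes=5),
                 idle_limit: dt.timedelta = dt.timedelta(minutes=15)) -> bool:
    """Renew only if the cache expires soon AND it was queried recently."""
    expiring_soon = expire_time - now <= renew_window
    recently_used = now - last_query_time <= idle_limit
    return expiring_soon and recently_used


now = dt.datetime(2026, 2, 27, 12, 0, tzinfo=dt.timezone.utc)
active = should_renew(expire_time=now + dt.timedelta(minutes=3),
                      last_query_time=now - dt.timedelta(minutes=2), now=now)
idle = should_renew(expire_time=now + dt.timedelta(minutes=3),
                    last_query_time=now - dt.timedelta(hours=2), now=now)
print(active, idle)
```

Letting idle caches expire instead of renewing them blindly keeps the storage fee tied to actual usage.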

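```python
import datetime

from google.generativeai import caching

# List all caches (materialize the iterator so it can be looped over twice)
caches = list(caching.CachedContent.list())

# expire_time is expected to be timezone-aware (UTC), so compare against an aware "now"
now = datetime.datetime.now(datetime.timezone.utc)

for c in caches:
    remaining = int((c.expire_time - now).total_seconds())
    print(f"{c.display_name}: {c.name} ({remaining} seconds remaining)")

# Batch cleanup of caches that expire within the next 5 minutes
for c in caches:
    if c.expire_time < now + datetime.timedelta(minutes=5):
        c.delete()
        print(f"Deleted cache: {c.display_name}")
```

Common Pitfalls and Solutions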

Pitfall 1: Cache miss still charges

Sometimes you think you’re using the cache, but the bill doesn’t decrease. The cause may be a wrong cache ID or an expired cache. Add logging to confirm that requests actually hit the cache.
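One way to add that logging is to check the usage metadata of each response. The sketch below assumes the metadata exposes `cached_content_token_count` and `prompt_token_count` fields, which is my understanding of the SDK's `response.usage_metadata`; verify this against your SDK version. The metadata objects here are simulated so the example runs offline, and the 0.9 threshold is an arbitrary choice.

```python
import logging
from types import SimpleNamespace

logger = logging.getLogger("cache_usage")


def cache_hit_ratio(usage) -> float:
    """Share of prompt tokens that were served from the cache."""
    cached = getattr(usage, "cached_content_token_count", 0) or 0
    prompt = getattr(usage, "prompt_token_count", 0) or 0
    return cached / prompt if prompt else 0.0


def check_cache_hit(usage, min_ratio: float = 0.9) -> bool:
    """Log a warning and return False when the request looks like a cache miss."""
    ratio = cache_hit_ratio(usage)
    if ratio < min_ratio:
        logger.warning("Possible cache miss: only %.1f%% of prompt tokens were cached",
                       ratio * 100)
        return False
    logger.info("Cache hit: %.1f%% of prompt tokens were cached", ratio * 100)
    return True


# Simulated metadata; with the real SDK this would be response.usage_metadata.
hit = SimpleNamespace(prompt_token_count=1_000_500, cached_content_token_count=1_000_000)
miss = SimpleNamespace(prompt_token_count=1_000_500, cached_content_token_count=0)
print(check_cache_hit(hit), check_cache_hit(miss))
```

Alerting on a run of misses catches expired or mistyped cache IDs before they show up on the bill.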

Pitfall 2: Token count inaccurate

The cached token count must be ≥32768, but your own count might differ from Google’s. Use the usage_metadata returned by the SDK to confirm.

Pitfall 3: Concurrency issues

Multiple requests using the same cache ID is fine, but pay attention to atomicity of cache renewal.

Pitfall 4: Content updates

If the original documents change, the cache won’t refresh automatically. You need to delete the old cache manually and create a new one.
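A simple way to detect stale caches is to fingerprint the source documents and compare the result with the fingerprint recorded when the cache was created. The sketch below uses SHA-256 from the standard library; where you store the fingerprint (a database row, or the cache's display name) is up to you, and the helper names are hypothetical.

```python
import hashlib
from typing import Iterable, Optional


def content_fingerprint(documents: Iterable[str]) -> str:
    """Stable hash of an ordered collection of documents."""
    digest = hashlib.sha256()
    for doc in documents:
        data = doc.encode("utf-8")
        # Length prefix keeps document boundaries unambiguous
        digest.update(len(data).to_bytes(8, "big"))
        digest.update(data)
    return digest.hexdigest()


def needs_rebuild(documents: Iterable[str], cached_fingerprint: Optional[str]) -> bool:
    """True when there is no recorded fingerprint or the documents have changed."""
    return cached_fingerprint != content_fingerprint(documents)


recorded = content_fingerprint(["API guide v1", "Troubleshooting manual"])
print(needs_rebuild(["API guide v1", "Troubleshooting manual"], recorded))
print(needs_rebuild(["API guide v2", "Troubleshooting manual"], recorded))
```

When `needs_rebuild` returns True, delete the old cache and create a new one from the updated documents.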

Architecture Decision: Is RAG Dead or Coexisting?

Limitations of Long Context

I’ve said a lot of good things about Gemini; time for some cold water.

Long context isn’t a panacea; it has obvious limitations:

Cost ceiling: Although Context Caching reduces per-query costs, if document volume is massive (tens of millions+ tokens), cache maintenance fees themselves aren’t cheap.

Update frequency: If your documents change frequently, caches need frequent rebuilding, diminishing advantages.

Precise citation: RAG can precisely tell users “the answer comes from page X paragraph Y,” while in long context mode, this kind of source tracing is more difficult.

Multi-tenant isolation: In multi-user scenarios, each user might need independent context, making cache management complex.

Scenarios Where RAG Remains Irreplaceable

Admittedly, some scenarios are still better suited for RAG:

- Massive document retrieval: When document scale reaches TB level, only vector databases can handle it efficiently

- High real-time update requirements: News, stock information, etc. needing minute-level updates

- Hybrid search needs: Complex queries combining keywords, tags, time, and other multi-dimensional filtering

- Existing mature infrastructure: If your RAG system is already stable, no need to rebuild for the new shiny

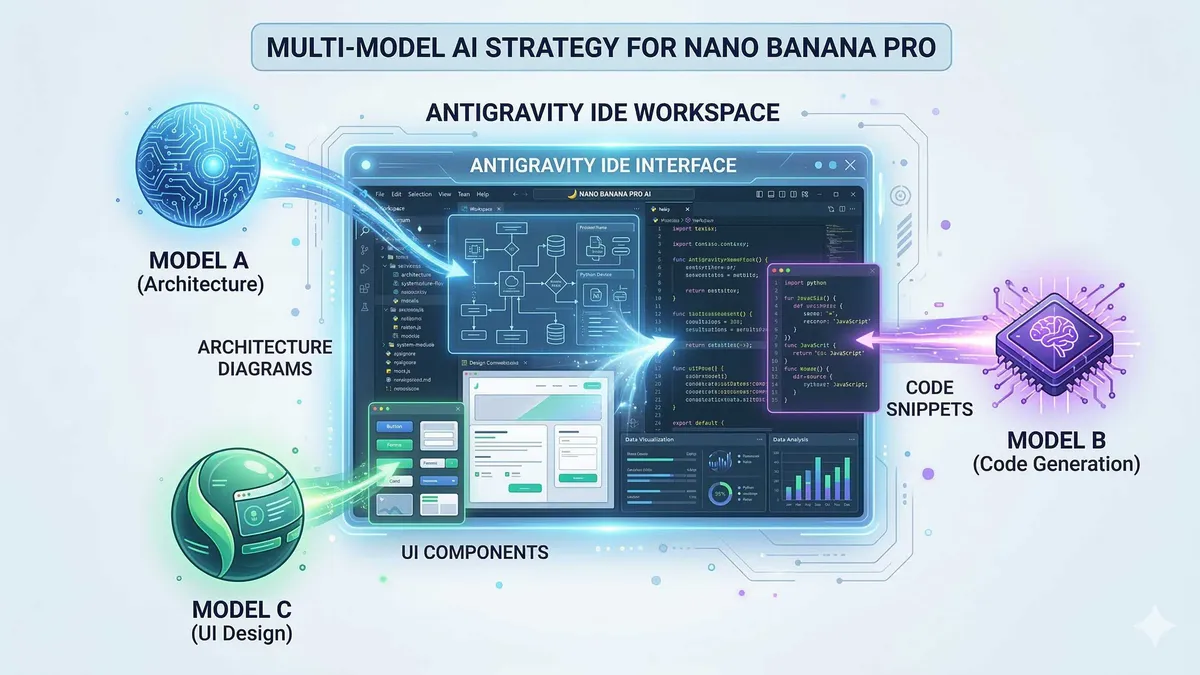

Possibility of Hybrid Architecture

Actually, long context and RAG aren’t mutually exclusive. Smart developers are already exploring hybrid architectures:

First layer filtering: Use vector retrieval to narrow things down to the few hundred most relevant documents

Second layer deep reading: Stuff these documents into Gemini’s long context for deep analysis

This avoids direct processing of massive documents while retaining long context comprehension depth.
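The two layers can be sketched as a coarse filter followed by context packing. To keep the example self-contained, keyword overlap stands in for vector retrieval, and token counts are estimated from word counts. In a real system the first function would query your vector database, and all names here are hypothetical.

```python
from typing import Dict, List


def overlap_score(query: str, document: str) -> int:
    """Crude relevance score: number of distinct words shared with the query."""
    return len(set(query.lower().split()) & set(document.lower().split()))


def coarse_filter(query: str, documents: Dict[str, str], top_k: int = 3) -> List[str]:
    """First layer: rank documents and keep the names of the top_k candidates."""
    ranked = sorted(documents,
                    key=lambda name: overlap_score(query, documents[name]),
                    reverse=True)
    return ranked[:top_k]


def pack_context(names: List[str], documents: Dict[str, str],
                 token_budget: int, words_per_token: float = 0.75) -> str:
    """Second layer input: concatenate candidates until the token budget is used."""
    parts, used = [], 0
    for name in names:
        cost = round(len(documents[name].split()) / words_per_token)
        if used + cost > token_budget:
            break
        parts.append(f"## {name}\n{documents[name]}")
        used += cost
    return "\n\n".join(parts)


docs = {
    "auth": "api key rotation and expiry policy",
    "billing": "invoice and payment schedule",
    "limits": "api rate limit policy per key",
}
candidates = coarse_filter("api key rate limit", docs, top_k=2)
context = pack_context(candidates, docs, token_budget=1_000_000)
print(candidates)
print(context)
```

The packed context would then be sent, or cached, as the long-context prompt for the deep-reading step.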

I’ve been trying this architecture myself recently, and results are surprisingly good. RAG handles “coarse screening,” Gemini handles “deep reading”—each plays to its strengths.

My Recommended Decision Tree

If you’re still torn after reading this article, refer to this simple decision tree:

```
What's your total document volume?
├── < 2M tokens → Use long context + Context Caching directly
└── > 2M tokens → Need RAG?
    ├── Need precise fragment citation → Use RAG
    ├── Documents update very frequently → Use RAG
    └── Otherwise → Consider hybrid architecture (RAG coarse filter + long context deep reading)
```

Future Outlook and Conclusion

Development Trends in Long Context Technology

Standing at the beginning of 2026 looking back, the pace of long context technology development is staggering.

Google has already tested Gemini at 10 million tokens in research settings, and other vendors such as Anthropic keep extending their windows too. It’s foreseeable that context windows will continue expanding and costs will continue dropping.

More importantly, models’ “effective memory” capabilities are constantly improving. Early long context models often “could hold but not remember”; now Gemini can “both hold and remember firmly.”

I estimate that in another year or two, “documents too large to fit” might fade into history like “insufficient memory” did.

Action Advice for RAG Developers

If you’re a RAG developer like me, facing this wave, I have some advice:

First, don’t panic. RAG won’t be completely replaced; it’s just finding a more suitable position. Just as relational databases weren’t killed by NoSQL, the two will coexist long-term.

Second, embrace change. Try migrating some small projects to long context solutions, experience the differences firsthand. Only by doing it yourself can you make correct technical judgments.

Third, focus on hybrid architecture. This might be the optimal solution for the foreseeable future—both RAG scalability and long context comprehension depth.

Fourth, do the cost math. Don’t be dazzled by the halo of “new technology,” and don’t cling to the comfort zone of the “old solution.” Let the data speak: whatever is cheaper and better, use that.

Honestly, after writing this article, my attitude toward Gemini shifted from initial skepticism to cautious optimism. It’s not a silver bullet, but it is indeed more elegant and economical than traditional RAG in certain scenarios.

Technology’s value lies not in newness or oldness, but in whether it solves real problems. I hope this article helps you make wise choices between long context and RAG.

If you have questions or want to share your hands-on experience, feel free to comment. After all, in this rapidly changing field, we’re all still learning.


15 min read · Published on: Feb 27, 2026 · Modified on: Mar 18, 2026
